Product discovery has never mattered more. And most companies are skipping it entirely.

Marty Cagan has been saying this for years. In his 2024 book Transformed, he makes the case that the majority of organizations operate in delivery mode. They ship roadmaps of features. They execute what stakeholders asked for. They never stop to ask whether they are solving the right problem. His core principle is blunt: your customers and your stakeholders are not able to tell you what to build.

Discovery - Delivery - Silicon Valley Product Group

That was already true before AI.

Now it is critical.

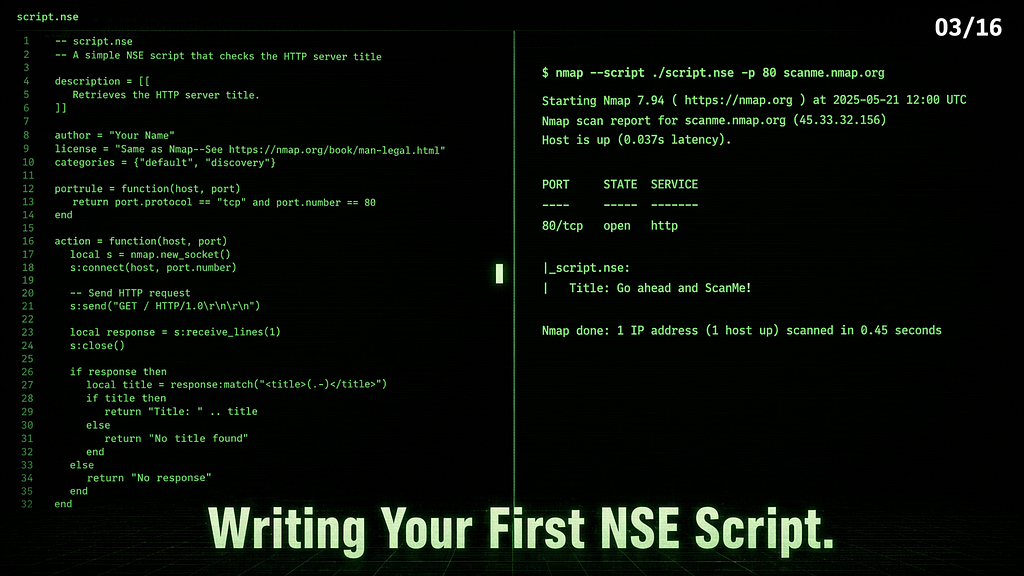

Because AI has made the delivery side almost free. Andrej Karpathy coined “vibe coding” in February 2025 to describe what happens when you let AI generate code without reading it, without reviewing it, without understanding it. A year later, he walked it back himself. His latest project, Nanochat, was entirely hand-written. He tried AI agents on it and found them, in his own words, “net unhelpful.” He now prefers the term “agentic engineering” to describe what serious work actually looks like: orchestrating agents with oversight, discipline, and taste.

Inventor of Vibe Coding Admits He Hand-Coded His New Project

The implication is clear. When building is cheap, knowing what to build becomes everything. And that is a discovery problem, not a technology problem.

The use case catalog is the most abstract approach there is

Here is the pattern I see everywhere. A company decides it needs to “do AI.” Leadership asks the team, or worse, asks a consulting firm, to identify “concrete use cases.” A catalog is produced. Pilots are launched. Demos impress. Then nothing scales.

The numbers confirm this at industrial scale. BCG surveyed over a thousand C-level executives in 2024 and found that 74% of companies struggle to generate any tangible value from AI. Only 26% manage to scale beyond the pilot stage. By September 2025, BCG updated those numbers and the situation had worsened: 60% generate no material value despite continued investment, and only 5% create substantial value at scale.

Let that sink in. The gap is widening, not closing.

AI Adoption in 2024: 74% of Companies Struggle to Achieve and Scale Value

The same BCG research identifies what separates the 5% from the rest. It is not better algorithms. It is not bigger budgets. It is what they call the 10–20–70 principle: AI success is 10% algorithms, 20% data and technology, 70% people, processes, and cultural transformation. The leaders who win, in BCG’s words, “fundamentally redesign workflows.” The laggards try to automate old, broken processes.

So when a company starts its AI journey by browsing a catalog of use cases, it is starting with the 10%. It is skipping the 70% that actually determines whether anything will work. That is not pragmatic. That is the most abstract approach imaginable.

The real work: map your process, then challenge it

Ethan Mollick is a professor at Wharton and one of the most grounded voices on AI adoption. He ran a large-scale experiment with BCG in which 8% of the firm's global workforce used AI on realistic business tasks. The measured results: a 40% improvement in quality, 26% faster completion, and 12.5% more work done.

Principles for Using AI at Work - Coaching for Leaders

But here is the part that matters more than the numbers. During a talk at Stanford, Mollick said something that should be printed and taped to every boardroom wall: “OpenAI doesn’t have any secret knowledge you don’t have. They have never thought about how this could be useful for your work. No one has tested it. You need to figure this out yourself, and the only way to figure it out is disciplined experimentation.”

Co-Intelligence: An AI Masterclass with Ethan Mollick

Nobody is going to hand you your use case. Not OpenAI. Not McKinsey. Not the vendor selling you a platform. The only people who can discover where AI creates real value in your organization are the people who actually do the work. And the prerequisite for that discovery is brutally simple: you need to know your own processes first. If you cannot describe what you are doing as a process, you cannot challenge it, and you certainly cannot automate it.

Andrew Ng frames this the same way. At AI Startup School in June 2025, he described the core skill behind successful AI projects as “breaking complex tasks into smaller, solvable steps.” That is not a machine learning insight. That is process decomposition. And it requires domain knowledge that no model and no consultant can substitute.

Andrew Ng: Building Faster with AI (Transcript)

Why you need help, but not the kind you think

Most companies do not have an AI problem. They have an agency problem.

Mollick identified this pattern and gave it a name: “secret cyborgs.” More than 50% of Americans say they use AI at work. Many of them report a threefold performance improvement on a fifth of their tasks. But they hide it. Because their organization has built elaborate rules focused on negative use cases, employees are too scared to share what they have learned.

Mollick’s prescription is structural: you need three things. Leadership that actively incentivizes experimentation. A lab where the best internal practitioners can turn discoveries into shared tools. And a crowd, meaning everyone in the organization, given permission to experiment.

Shreyas Doshi, who built and scaled products at Stripe and Twitter, calls this high agency. His definition is precise: “High Agency is about finding a way to get what you want, without waiting for conditions to be perfect or otherwise blaming the circumstances.” In his experience, highly talented people with low agency end up capitulating to the system. It is not talent that is missing. It is the organizational permission to act.

This is where external help actually matters. Not to hand you a catalog. Not to choose your use case for you. External help matters when it does three things: it forces you to map your processes honestly, it transfers the competence to challenge those processes with AI, and it builds the internal muscle so your teams can keep going without you.

If your external partner leaves and takes the knowledge with them, you have not been helped. You have been rented.

Leadership is the bottleneck

Cagan says it plainly: “The largest barrier is trust. It requires real trust for executives and stakeholders to empower others to take responsibility for solving critical problems.” Transformation is not a technology purchase. It is progressively earning that trust by showing results, one team at a time.

The companies that win with AI are not the ones with the best models or the biggest budgets. They are the ones where a leader decided to stop waiting for the perfect use case, mapped the actual work, gave teams the agency to experiment, and invested in the 70% that everyone else ignores.

BCG’s data shows the reward: AI leaders achieve 1.5 times higher revenue growth and 1.6 times greater shareholder returns over three years. And they plan to invest 64% more of their IT budget in AI than the laggards do. The gap compounds.

There is no magic wand on any shelf. There is only your process, your people, and the decision to actually look at both honestly.

Stop searching for concrete use cases. Start with what you already do. That is where the value is.

Stop Searching for “Concrete Use Cases” of AI. Find Yours. was originally published in Level Up Coding on Medium.