I Tried Fine-Tuning an LLM on Blockchain Data With Just 30 Examples — And It Failed Spectacularly

Most AI tutorials show the perfect demo. This one doesn’t.

Akpan Daniel · 5 min read


344,064 trainable parameters.

77 million total.

10 epochs.

3 minutes on a free Google Colab GPU.

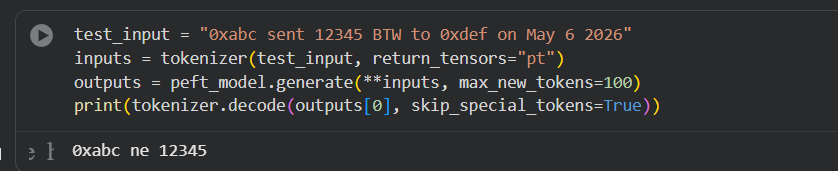

And after all that training, the model gave me this:

“0xabc ne 12345”

That was it.

No JSON.

No structured output.

Just broken nonsense.

And weirdly enough, that’s when the experiment started getting interesting.

Because most people online talk about AI like it’s magic now: fine-tune a model, throw “AI-powered” on the landing page, post a screenshot, and collect engagement.

But when you actually sit down and build this stuff yourself, you realize something fast:

AI doesn’t “understand” your problem.

It pattern matches.

And if the patterns are weak, messy, or too small, the model falls apart instantly.

That gets overlooked a lot in AI conversations right now.

People think the hard part is accessing the model.

It’s not.

The hard part is getting clean enough signals for the model to learn something useful.

That’s what this experiment taught me.

Why I Even Built This

I’ve spent years around crypto, wallets, on-chain tools, and blockchain analytics.

One thing that always stood out to me is how messy blockchain data really is.

People outside web3 think on-chain data is “clean” because everything is transparent.

It’s actually the opposite.

Raw transaction logs are chaos.

Wallet addresses.

Token symbols.

Contract calls.

Gas values.

Random formatting everywhere.

And yet people build billion-dollar analytics platforms on top of that mess.

So I wanted to test something simple:

Could a small language model learn how to turn messy blockchain-style transaction text into structured JSON?

Something like this:

Input:

0xabc sent 12345 BTW to 0xdef on May 6 2026

Desired output:

{
  "token": "BTW",
  "amount": 12345,
  "from": "0xabc",
  "to": "0xdef",
  "date": "May 6 2026"
}

Simple idea.
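In code, one training pair for this task could look something like the sketch below. The prompt prefix and the `make_pair` helper are hypothetical, made up for illustration, and not necessarily how the data for this experiment was prepared.

```python
import json

# Hypothetical prompt prefix: an assumption for illustration only.
PROMPT_PREFIX = "Extract transaction fields as JSON: "


def make_pair(text, token, amount, sender, recipient, date):
    """Return (input_text, target_text) for one seq2seq training example."""
    target = json.dumps(
        {
            "token": token,
            "amount": amount,
            "from": sender,
            "to": recipient,
            "date": date,
        }
    )
    return PROMPT_PREFIX + text, target


source, target = make_pair(
    "0xabc sent 12345 BTW to 0xdef on May 6 2026",
    token="BTW",
    amount=12345,
    sender="0xabc",
    recipient="0xdef",
    date="May 6 2026",
)
print(source)
print(target)
```

Building the target with `json.dumps` instead of typing it by hand keeps every example in the same key order and quoting style, which matters a lot when the dataset is tiny.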

But the real-world use cases are actually pretty big:

- whale tracking

- compliance systems

- fraud monitoring

- wallet alerts

- automated reporting

- transaction intelligence

That’s where things get interesting.

Not “AI girlfriend” apps.

Not another chatbot wrapper.

Actual infrastructure problems.

The Setup Was Intentionally Cheap

I didn’t use expensive GPUs.

Didn’t rent servers.

Didn’t spend money.

I used:

- FLAN-T5-small

- LoRA

- free Google Colab T4 GPU

That’s it.

Honestly, that’s one of the craziest parts about AI right now.

Five years ago, this kind of experimentation would’ve needed serious hardware.

Now, anybody with patience and WiFi can test ideas like this from a browser tab.

I used LoRA because I didn’t want to retrain the full model.

The easiest way to explain LoRA is this:

Imagine a textbook.

Full fine-tuning rewrites the entire book.

LoRA adds sticky notes in important places.

The original model stays mostly untouched while tiny adapter layers learn the new task.

Out of 77 million parameters, only about 344k were trainable.

That’s less than 0.5%.
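If you want to see where a number like 344k can come from, here is a rough back-of-the-envelope sketch. It assumes LoRA rank 8 applied to the query and value projections, which is a common setup but is an assumption here, plus the layer sizes from the public `google/flan-t5-small` config.

```python
# Assumed LoRA settings (not confirmed by the write-up above):
# rank 8, adapters on the query (q) and value (v) projections only.
RANK = 8
TARGETS_PER_ATTENTION = 2  # q and v

# Layer sizes from the public google/flan-t5-small config.
D_MODEL = 512
INNER_DIM = 6 * 64  # num_heads * d_kv = 384
ENCODER_LAYERS = 8
DECODER_LAYERS = 8

# Each encoder layer has one attention block; each decoder layer has two
# (self-attention and cross-attention).
attention_blocks = ENCODER_LAYERS + 2 * DECODER_LAYERS

# One adapter is two small matrices: A (RANK x D_MODEL) and B (INNER_DIM x RANK).
params_per_adapter = RANK * D_MODEL + INNER_DIM * RANK

trainable = attention_blocks * TARGETS_PER_ATTENTION * params_per_adapter
total = 77_000_000  # rounded total from the text above

print(trainable)  # 344064
print(f"{100 * trainable / total:.2f}%")  # 0.45%
```

Under those assumptions the arithmetic lands on the same trainable count, and the "sticky notes" are just two thin matrices attached to each attention projection.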

And the full training run took around 3 minutes.

That part actually worked beautifully.

Then Reality Hit

Here’s the part most AI posts skip.

My dataset had only 30 examples.

Yeah… thirty.

I knew it was small.

But I wasn’t trying to build a production model yet.

I just wanted to validate the workflow first:

- tokenization

- training loop

- inference

- LoRA setup

- GPU memory

- outputs

Basically:

“Can this pipeline even run properly?”

Because if it breaks at 30 examples, it’ll definitely break at 5,000.

Training itself looked fine.

Loss slowly dropped:

- 11.05

- 11.02

- 10.98

Tiny improvement.

Nothing dramatic.

Then I tested inference on a new example.

And the model responded with:

“0xabc ne 12345”

I stared at the screen for like 10 seconds thinking:

“Seriously?”

But after the frustration wore off, the result actually made sense.

The model wasn’t “stupid.”

It just didn’t have enough signal.

That’s the part people underestimate with AI.

Models don’t magically become smart because you fine-tuned them.

If your data is weak, your outputs will be weak too.

AI reflects the quality of the patterns you feed it.

That applies way beyond machine learning, honestly.
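One cheap way to catch outputs like the one above is to check them automatically instead of eyeballing them. Here is a minimal sketch, assuming the five keys from the desired output earlier; the `is_valid_record` helper is hypothetical and only uses Python's standard `json` module.

```python
import json

# Keys taken from the desired output shown earlier in the post.
REQUIRED_KEYS = {"token", "amount", "from", "to", "date"}


def is_valid_record(output_text):
    """Return True if the text parses as a JSON object with exactly the expected keys."""
    try:
        record = json.loads(output_text)
    except json.JSONDecodeError:
        return False
    return isinstance(record, dict) and set(record) == REQUIRED_KEYS


bad = "0xabc ne 12345"
good = '{"token": "BTW", "amount": 12345, "from": "0xabc", "to": "0xdef", "date": "May 6 2026"}'

print(is_valid_record(bad))   # False
print(is_valid_record(good))  # True
```

Running a check like this over a held-out set gives you a simple "valid JSON rate" to track between runs, which is usually more telling than a loss curve for a formatting task.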

The Part That Changed My Thinking

Before running this experiment, I thought the difficult part would be the model itself.

Turns out the model was the easy part.

The hard part is data quality.

That’s the real bottleneck nobody talks about enough.

You see companies saying:

“We’re building AI-powered systems.”

Cool.

But if the underlying data is inconsistent, duplicated, noisy, or tiny, the AI layer doesn’t save you.

It amplifies the mess.

That’s true in crypto.

It’s true in analytics.

It’s true in customer support systems.

It’s true almost everywhere.

And honestly, that’s why I think a lot of AI products today feel impressive in demos but weak in production.

The infrastructure underneath usually isn’t mature enough yet.

Why I Still Consider This a Win

The output failed.

But the pipeline didn’t.

And that matters more than people think.

By the end of the experiment, I had:

- working LoRA adapters

- successful training

- clean inference pipeline

- functioning tokenization

- GPU optimization

- reproducible workflow

That’s a real foundation.

Now imagine replacing 30 fake examples with thousands of real on-chain transactions.

Now we’re talking about something useful.

That’s where this starts becoming infrastructure instead of experimentation.

What’s Next

I’ve already started pulling real transaction logs from Etherscan for the next version.

The goal now is:

- larger dataset

- better formatting

- stronger base model

- cleaner structured outputs

And honestly, I’m glad the first version failed publicly.

It forced me to understand the system instead of just celebrating screenshots.

That’s something I think a lot of builders in AI skip right now.

The internet rewards polished demos.

But real learning usually happens in the ugly outputs.

And sometimes the broken result teaches you more than the perfect one ever could.