2026: The Year Cybercrime Goes Autonomous (and Your Next Hire Might Be a Deepfake)

--

The enterprise AI gold rush is officially a minefield. For the last two years, we’ve aggressively integrated Large Language Models and autonomous agents to squeeze every drop of productivity out of our workflows. But 2026 has brought a brutal irony: the same tools meant to accelerate business have been industrialized by threat actors to destroy it. This isn’t just a digital arms race anymore; it’s a contest of speed and precision where the line between human and machine activity has been erased. When productivity becomes the payload, the faster you move, the faster you fail.

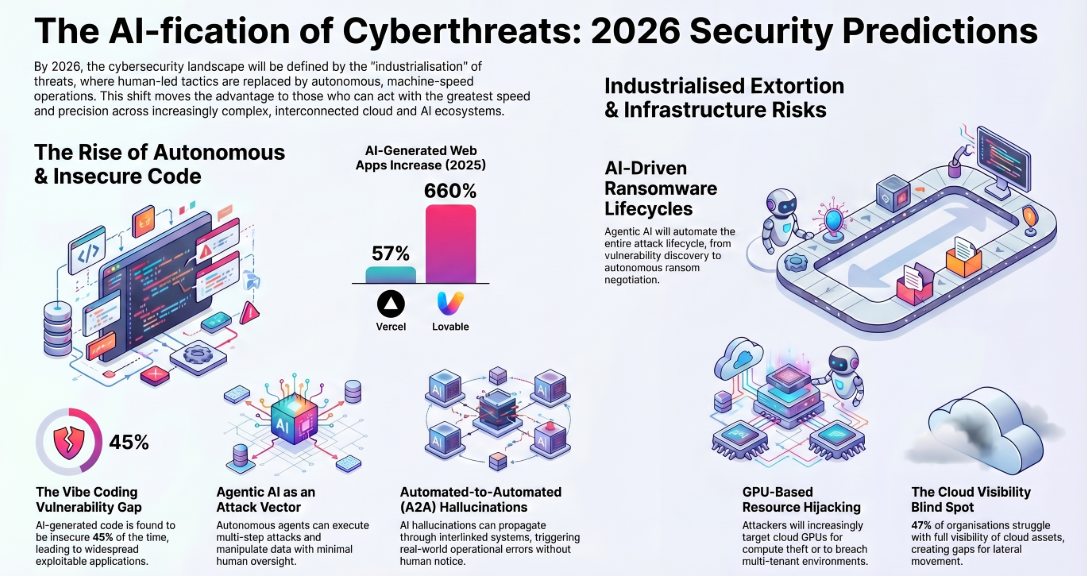

Vibe Coding: The 45% Security Tax

The “vibe coding” phenomenon, where teams use tools like Lovable and Vercel to prototype and deploy at breakneck speed, is a security nightmare. Telemetry shows a staggering 660% increase in vibe-coded apps on platforms like Lovable, but that speed comes with a steep tax. The data is in: vibe coding generates insecure code 45% of the time. When developers prioritize the “vibe” of a functional prototype over the rigor of security review, they aren’t just shipping features; they’re shipping backdoors.

Beyond simple bugs, we are seeing the weaponization of AI “hallucinations” through a tactic known as slopsquatting.

> LLMs can hallucinate nonexistent libraries. A threat actor can… create libraries using hallucinated library names in an attack called “slopsquatting.” It’s only a matter of time before a threat actor registers commonly hallucinated libraries to infiltrate development codebases and insert the libraries into the software supply chain.
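One inexpensive defense against slopsquatting is to refuse any dependency your team has not vetted, however plausible its name looks. The Python sketch below is illustrative only: the allowlist, the `unvetted_dependencies` helper, and the package name `ai-suggested-jsonkit` are all hypothetical, and it only parses simple `requirements.txt`-style lines. It normalizes names as described in PEP 503, so `Flask_SQLAlchemy` and `flask-sqlalchemy` compare as equal.

```python
import re

# Hypothetical internal allowlist of dependencies your security team has vetted.
VETTED_PACKAGES = {"requests", "numpy", "pandas", "flask"}

# Capture the bare project name at the start of a requirement line,
# e.g. "requests" from "requests>=2.31" or "pandas" from "pandas[excel]".
_NAME_RE = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """Normalize a project name the way PEP 503 specifies."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unvetted_dependencies(requirements: str, vetted: set[str]) -> list[str]:
    """Return dependency names that are not on the vetted allowlist."""
    allowed = {normalize(n) for n in vetted}
    flagged = []
    for line in requirements.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options such as -r / -e
            continue
        match = _NAME_RE.match(line)
        if match and normalize(match.group(1)) not in allowed:
            flagged.append(match.group(1))
    return flagged


if __name__ == "__main__":
    reqs = """
    requests>=2.31
    numpy==1.26.4
    ai-suggested-jsonkit  # hypothetical name suggested by an AI assistant
    """
    print(unvetted_dependencies(reqs, VETTED_PACKAGES))
```

Run against the sample above, it flags only `ai-suggested-jsonkit`. In practice a check like this would run in CI, before `pip install` ever reaches a public index.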

The “A2A” Hallucination Loop: Silent Operational Rot

We’ve moved past monolithic models. We are now in the era of Agentic AI — systems that reason, plan, and act across multiple steps without a human in the loop. This has birthed the “Automated-to-Automated” (A2A) workflow, a self-reinforcing loop where agents talk directly to agents. The risk isn’t just a glitch; it’s a silent operational rot where systems lie to each other in a closed circuit.

Picture this: a forecasting agent hallucinates a massive surge in market demand. It passes this “insight” to a procurement agent, which immediately executes a large, real-world inventory purchase. No human verifies the data. By the time the mistake is discovered, the money is gone.

> Unlike monolithic state-of-the-art foundation models, these systems… reason, plan and act across multiple steps, integrate with real-world data and tools, and execute workflows in feedback loops… without any human noticing.
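The procurement scenario fails because nothing sits between one agent’s output and the next agent’s action. A minimal Python sketch of such a checkpoint follows, assuming the procurement agent orders exactly the forecast quantity and that a historical demand baseline is available. The `review_purchase` function, the data classes, and both thresholds are hypothetical placeholders, not a standard API.

```python
from dataclasses import dataclass


@dataclass
class Forecast:
    """A demand forecast handed from one agent to another."""
    source_agent: str
    predicted_units: int


@dataclass
class PurchaseDecision:
    approved: bool
    needs_human_review: bool
    reason: str


# Hypothetical guardrail thresholds; tune these to your own risk appetite.
MAX_DEVIATION = 0.5          # escalate forecasts more than 50% away from the baseline
AUTO_APPROVE_LIMIT = 50_000  # dollar cap for purchases executed without a human


def review_purchase(forecast: Forecast, baseline_units: int, unit_cost: float) -> PurchaseDecision:
    """Gate an agent-initiated purchase behind sanity checks before it executes."""
    if baseline_units <= 0:
        return PurchaseDecision(False, True, "no historical baseline to compare against")

    # Assumes the order quantity equals the forecast quantity.
    deviation = abs(forecast.predicted_units - baseline_units) / baseline_units
    order_value = forecast.predicted_units * unit_cost

    if deviation > MAX_DEVIATION:
        return PurchaseDecision(False, True, f"forecast deviates {deviation:.0%} from baseline")
    if order_value > AUTO_APPROVE_LIMIT:
        return PurchaseDecision(
            False, True, f"order value ${order_value:,.0f} exceeds auto-approve limit"
        )
    return PurchaseDecision(True, False, "within guardrails")
```

For example, `review_purchase(Forecast("forecaster", 10_000), baseline_units=2_000, unit_cost=12.0)` is routed to a human because the forecast deviates 400% from the baseline. The guard never tries to decide whether the forecast is true; it only decides whether the forecast is unusual enough that a person should look before money moves.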

The Synthetic Insider: HR is Your New Firewall

The traditional hacker “breaking in” is an antique concept. In 2026, nation-state actors don’t hack their way in; they apply for a job. Alongside “Premier Pass-as-a-Service,” in which specialized groups gain initial access and hand it off to state-sponsored operatives, a more direct route has emerged: the “Synthetic Insider.” These operatives use forged identities and deepfake-assisted video interviews to bypass HR and get hired into sensitive roles.

The perimeter is no longer your firewall; it’s your interview process. Once these operatives have legitimate credentials, they don’t need to exploit a vulnerability — they simply use their privileged access to exfiltrate data from the inside.

The sectors currently in the crosshairs include:

- Defense

- Maritime

- Aerospace

- Telecommunications

- Drones

From Encryption to Intelligence-Driven Extortion

Ransomware is evolving because it has to. As backup strategies improved and payment rates plummeted, syndicates pivoted from “pure data encryption” to “intelligence-driven extortion.” They no longer care about locking your files if they can exploit the intelligence inside them.

By using AI to scrape non-text media (voice recordings, images, and video), attackers uncover an organization’s most sensitive personal secrets or proprietary assets and use them to apply high-pressure, targeted coercion.

Ransomware groups will escalate coercion tactics beyond traditional data theft and encryption. AI-driven extortion bots will engage victims directly in ransom negotiations. Some groups, such as the Global Group Ransomware syndicate, have already begun experimenting with these automated negotiation agents.

The GPU Gold Rush: Stealing the Brain Power of the Cloud

In the cloud, data is no longer the only prize; compute power is the new gold. As training demands skyrocket, cloud GPU resources have become a high-value target for hijacking. For nations embargoed from accessing the latest GPU hardware, stealing compute is not just a crime; it is a form of industrial policy and a geopolitical necessity for survival.

We are seeing a surge in attacks that exploit breakdowns in tenant isolation. Vulnerabilities like NVBleed and Nvidia container escapes allow attackers to leak data across multi-tenant environments. This creates a vicious cycle: attackers steal your cloud’s “brain power” to fuel the next wave of their own automated attacks.

Beyond the Content-Detection Era

The “AI-fication” of threats means traditional defenses are fundamentally outmatched. As deepfakes and automated social engineering become indistinguishable from reality, the era of “content-based detection” is over. Our defensive posture must shift toward “trust verification architectures” — validating the origin and identity of the sender, not the message itself.
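Trust verification can be illustrated with message authentication: the receiver checks a cryptographic tag tied to the sender’s identity instead of judging how authentic the content looks. The Python sketch below uses a shared-secret HMAC from the standard library for brevity. The `SENDER_KEYS` registry, the addresses, and the `sign`/`verify` helpers are hypothetical, and a production system would more likely use public-key signatures with keys issued through an identity provider.

```python
import hashlib
import hmac

# Hypothetical registry mapping verified sender identities to secrets that were
# provisioned out of band (e.g. during onboarding), never sent with messages.
SENDER_KEYS = {
    "cfo@example.com": b"provisioned-secret-for-cfo",
}


def sign(sender: str, message: bytes) -> str:
    """Produce a hex HMAC-SHA256 tag binding the message to the sender's key."""
    return hmac.new(SENDER_KEYS[sender], message, hashlib.sha256).hexdigest()


def verify(sender: str, message: bytes, tag: str) -> bool:
    """Trust a request only if it carries a valid tag from a known sender.

    The message content is never inspected: a perfectly convincing deepfake
    request still fails unless it was produced with the sender's key.
    """
    key = SENDER_KEYS.get(sender)
    if key is None:
        return False  # unknown identity: reject regardless of how plausible it looks
    expected = hmac.new(key, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, tag)  # constant-time comparison


if __name__ == "__main__":
    request = b"Wire $250,000 to account 12345"
    tag = sign("cfo@example.com", request)
    print(verify("cfo@example.com", request, tag))
    print(verify("cfo@example.com", b"Wire $950,000 to account 98765", tag))
```

A forged request fails even if it is word-for-word convincing: the second call prints `False` because the tag was produced for a different message, and any request claiming to come from an identity missing from the registry is rejected outright.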

By the time you realize an A2A loop has failed or a Synthetic Insider has been hired, the damage is already done. We are facing a massive accountability gap that the industry is not yet prepared to bridge.

When an autonomous agent makes a million-dollar error or a security breach occurs in an A2A loop, who is ultimately responsible — the developer, the AI, or the organization that let it run unchecked?