Why Privacy Systems Fail at the Edges, Not Only in Circuits

Lucia Romano · 6 min read · Just now

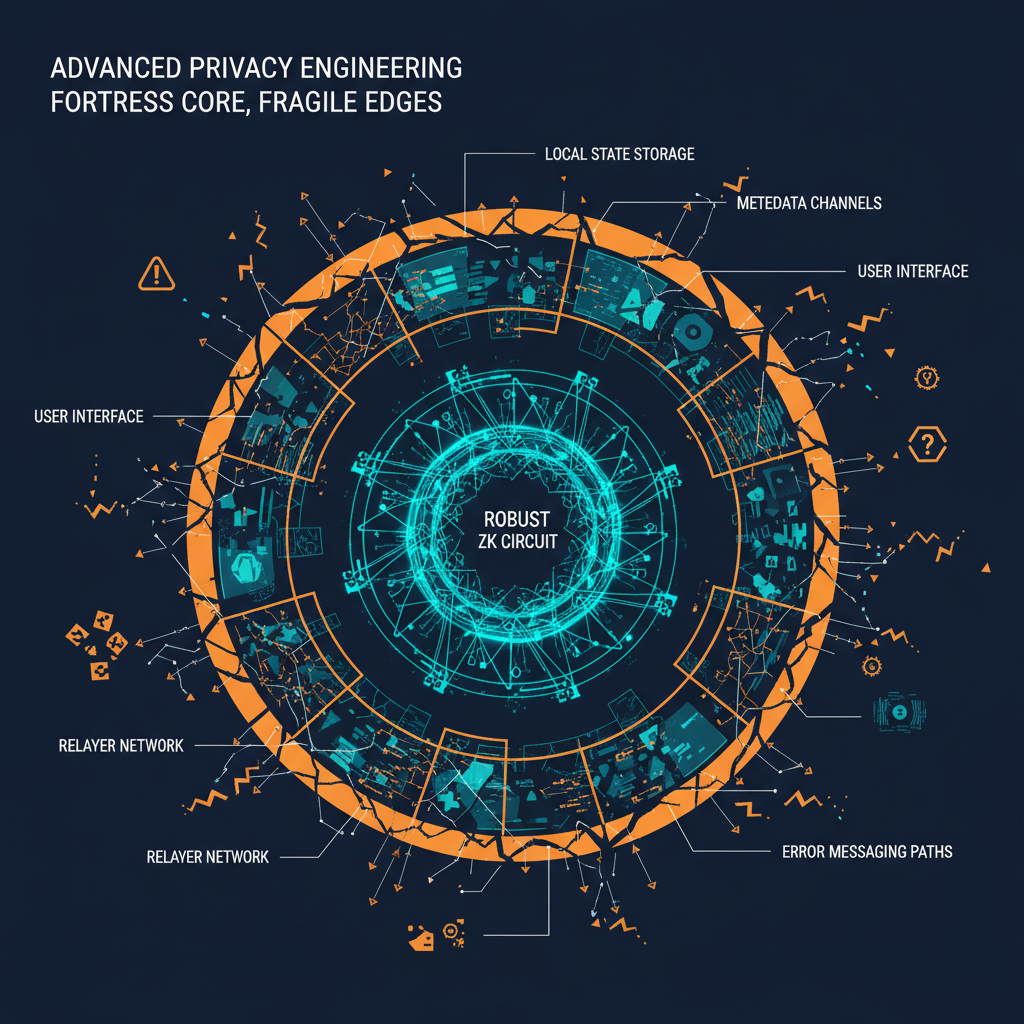

When engineers talk about privacy systems, we often start with the cryptography.

That is natural. The proof system is the interesting part. The circuit defines what can be proven. The commitment scheme defines what can be hidden. The Merkle tree, nullifier, witness, and verifier create the clean mathematical story.

But production privacy systems rarely fail only where the math is written.

They fail at the edges.

They fail where proofs meet interfaces, where notes meet user habits, where relayers meet network conditions, where metadata leaks through ordinary behavior, and where technically correct systems become operationally confusing.

A circuit can be correct while the workflow around it is fragile.

That distinction matters more than most postmortems admit.

Cryptographic Correctness Is Not Product Safety

A proof can verify exactly what it is supposed to verify.

The user can still make a mistake that destroys their ability to use the system safely.

This is not a criticism of cryptography. It is a reminder that cryptography is one layer in a larger system.

In a privacy application, the circuit might prove membership, prevent double-spending, and hide the link between two events. But the user still has to store local data, choose a timing pattern, interact with an interface, understand transaction failures, preserve witness inputs, and avoid leaking metadata elsewhere.

Those are product and operational problems.

If the design treats them as outside the system, users will still experience them as system failures.

The Edge Case Is Usually Not Rare

Engineers like to call these failures edge cases.

The phrase is misleading.

The edge is where the user spends most of their time. They do not live inside the circuit. They live inside browsers, wallets, mobile notes apps, RPC errors, failed transactions, support messages, screenshots, local backups, and half-remembered instructions.

That means the “edge case” is often the normal case under stress.

A user copies only part of a note. A browser clears local storage. A relayer returns a vague error. The user changes devices. The interface disappears. A proof fails, but the error does not say whether the note, chain, denomination, root, or relayer path is the issue.

None of this breaks the circuit.

It breaks the user’s path through the system.

Notes, Witnesses, And Forgotten State

Many privacy workflows rely on user-held state.

That state may be called a note, secret, witness input, local key, or recovery data depending on the system. The exact name is less important than the responsibility it creates.

If the system requires the user to preserve a value, then forgetting that value is not just a user mistake. It is part of the risk model.

Engineers sometimes describe this too casually: “The user should back it up.”

That statement may be technically correct, but it does not solve the design problem.

Users lose files. They copy partial strings. They store values in unsafe places. They confuse networks. They forget which deposit or commitment a value belongs to. They move between devices. They assume a browser, wallet, or app will remember more than it actually does.

The cryptographic system may have no way to recreate missing state.

That is an edge failure.

The proof did not break. The user’s operational path did.
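One way to bring partial copies and network confusion into the design is to have the saved value carry its own context and a checksum. The sketch below is illustrative only: the `note-v1` tag, the colon-separated layout, and the function names are assumptions, not the format of any particular protocol.

```python
import hashlib

PREFIX = "note-v1"  # hypothetical format tag, invented for this sketch


def _checksum(body: str) -> str:
    # Short checksum over the note body. It detects truncation and typos;
    # it is not a security mechanism.
    return hashlib.sha256(body.encode("utf-8")).hexdigest()[:8]


def encode_note(network: str, denomination: str, secret: bytes) -> str:
    """Build a note string that records which network and denomination it belongs to."""
    body = ":".join([PREFIX, network, denomination, secret.hex()])
    return f"{body}:{_checksum(body)}"


def decode_note(text: str) -> dict:
    """Parse a note string, raising ValueError with a specific reason on failure."""
    body, sep, check = text.strip().rpartition(":")
    if not sep or len(check) != 8:
        raise ValueError("note looks truncated: checksum is missing or cut off")
    if _checksum(body) != check:
        raise ValueError("note looks incomplete or altered: checksum mismatch")
    parts = body.split(":")
    if len(parts) != 4 or parts[0] != PREFIX:
        raise ValueError("unsupported note format")
    _, network, denomination, secret_hex = parts
    return {
        "network": network,
        "denomination": denomination,
        "secret": bytes.fromhex(secret_hex),
    }
```

Running a check like `decode_note` when the user pastes a value, before any proof is generated, lets the interface say "this note looks incomplete" instead of failing later with an unrelated error.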

Interfaces Leak Mental Models

Interfaces do not only display state. They teach users how to think about the system.

If an interface hides complexity too aggressively, the user may not understand what must be preserved. If it exposes too much raw data, the user may not know what matters. If it uses vague error messages, the user may interpret a temporary failure as permanent loss or a permanent limitation as a temporary bug.

Privacy interfaces have an especially hard job because they must explain enough without encouraging unsafe behavior.

For example, an interface might need to communicate:

- what data the user must keep,

- what data should never be shared,

- what a failed transaction does or does not mean,

- whether a proof was generated,

- whether a nullifier has already been used,

- whether the current Merkle root is accepted,

- whether a relayer failed or the underlying action failed,

- whether the chain state has changed since the user generated inputs.

Each unclear message becomes a point where users invent their own interpretation.

That is usually where mistakes begin.
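Several items on that list can be checked before anything is submitted, and each can be reported on its own rather than collapsed into a single failure state. A minimal sketch, assuming a hypothetical `ChainView` snapshot that the client has already fetched; the field names and message wording are illustrative:

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ChainView:
    """Hypothetical snapshot of what the client currently knows about the chain."""
    network: str
    accepted_roots: FrozenSet[str]
    spent_nullifiers: FrozenSet[str]


def preflight(note_network: str, proof_root: str, nullifier: str,
              chain: ChainView) -> List[str]:
    """Return one plain-language message per detected problem (empty list if none)."""
    problems = []
    if note_network != chain.network:
        problems.append(
            f"This note belongs to '{note_network}', but the selected "
            f"network is '{chain.network}'."
        )
    if proof_root not in chain.accepted_roots:
        problems.append(
            "The Merkle root used for this proof is not currently accepted. "
            "Chain state may have changed since the inputs were generated."
        )
    if nullifier in chain.spent_nullifiers:
        problems.append("This note's nullifier has already been used.")
    return problems
```

Each message names one condition, so the user is not left to guess which of the possible causes applies.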

Metadata Lives Outside The Circuit

A privacy proof can hide a relationship inside the protocol while the user reveals information outside it.

This is one of the most common misunderstandings about privacy systems.

The circuit may hide the link between a commitment and a later action. But users can still leak information through timing, IP address, wallet funding paths, browser behavior, RPC providers, reused addresses, gas patterns, exchange deposits, social posts, screenshots, or support messages.

The proof system solves a defined problem. It does not automatically solve every metadata problem around the user.

This does not make the proof useless.

It means privacy is a system property, not only a circuit property.

The edges matter because users live at the edges.

Relayers And Failure Interpretation

Relayers are often treated as infrastructure details, but from the user’s perspective they are part of the privacy workflow.

When a relayer fails, the user may not know what failed.

Was the proof invalid? Was the transaction rejected? Was the fee too low? Was the destination wrong? Was the relayer unavailable? Did the chain revert? Did the action partially complete? Does the user need to regenerate something?

The answer may be obvious to an engineer reading logs.

It is not obvious to a user looking at a failed interface state.

This is another edge failure: the system may preserve its cryptographic guarantees while failing to communicate operational status.

Poor failure messages are dangerous because they create unnecessary retries, unsafe sharing of data, or trust in unofficial helpers who sound more certain than the interface.
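One way to make those questions answerable is to record which stage failed and derive the user's next step from it. The stage names and wording below are assumptions for illustration; they do not come from any specific relayer API.

```python
from enum import Enum


class Stage(Enum):
    """Where in the workflow a failure happened (hypothetical taxonomy)."""
    PROOF_NOT_GENERATED = "proof_not_generated"
    NOT_SUBMITTED = "not_submitted"
    RELAYER_REJECTED = "relayer_rejected"
    CHAIN_REVERTED = "chain_reverted"
    UNKNOWN = "unknown"


# stage -> (what happened, suggested next step)
GUIDANCE = {
    Stage.PROOF_NOT_GENERATED: (
        "A proof could not be generated from the inputs provided.",
        "Check the note, network, and denomination. Nothing was sent.",
    ),
    Stage.NOT_SUBMITTED: (
        "The relayer could not be reached, so the proof was not submitted.",
        "Try again later or choose a different relayer.",
    ),
    Stage.RELAYER_REJECTED: (
        "The relayer received the request and declined it.",
        "Check the fee and destination. This does not necessarily mean "
        "the proof is invalid.",
    ),
    Stage.CHAIN_REVERTED: (
        "The transaction was submitted but reverted on chain.",
        "Chain state may have changed; the proof may need to be regenerated.",
    ),
    Stage.UNKNOWN: (
        "The failure could not be classified.",
        "Check the transaction status before retrying.",
    ),
}

SAFETY_LINE = "Do not share your note or secret with anyone offering to help."


def explain(stage_name: str) -> str:
    """Turn a reported stage name into a message; unrecognized names map to UNKNOWN."""
    try:
        stage = Stage(stage_name)
    except ValueError:
        stage = Stage.UNKNOWN
    what, next_step = GUIDANCE[stage]
    return f"{what} {next_step} {SAFETY_LINE}"
```

The safety line is appended to every message on purpose: a failure is exactly when a user is most likely to accept help from someone who asks for their secret.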

A Better Error Message Is Part Of The Protocol Experience

I do not mean that every privacy system needs to reveal sensitive internal details. That would create its own risks.

But error design matters.

There is a large difference between:

Transaction failed.

and:

The proof was not submitted. Check whether the selected network, note format, denomination, and recent Merkle root match before trying again.

The second message still avoids operational overreach, but it gives the user a safer next step. It reduces panic. It reduces unnecessary retries. It reduces the chance that the user will paste sensitive material into an unofficial chat because the interface gave them nothing useful.

Documentation and error states are not cosmetic.

They are security surfaces.

Documentation Is Security Infrastructure

Privacy documentation is often treated as an educational accessory.

It should be treated as security infrastructure.

Good documentation tells users what the system can do, what it cannot do, what data must be preserved, what data must not be shared, how to interpret common errors, and when not to proceed.

The most important documentation is not always the most advanced.

Sometimes the highest-value documentation is simple:

- save this value securely,

- do not share this value,

- this value cannot be recreated if lost,

- a failed relayer does not necessarily mean the proof is invalid,

- check chain and denomination before assuming loss,

- do not rely on screenshots as your only backup,

- do not follow instructions that require secrets.

These statements may seem basic. They prevent real failures.

Privacy Systems Need Operational Design

I like proof systems because they force precision.

The challenge is carrying that precision into the rest of the product.

If a circuit has clear constraints but the user workflow has vague assumptions, the system is only precise in the part most users never see.

Operational design means asking uncomfortable questions:

- What happens when the user loses local state?

- What happens when a relayer fails?

- What happens when the UI disappears?

- What happens when the user changes devices?

- What happens when support needs evidence without sensitive data?

- What happens when the user misunderstands a warning?

- What happens when the proof is valid but the surrounding metadata is not private?

These are not secondary details. They are part of the actual security model experienced by users.
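The support question is one where a small amount of code can help: diagnostic text can be redacted on the user's device before it is shared. A sketch, assuming notes follow a hypothetical `note-v<N>:` shape; a real system would match its own formats and would likely need more patterns than this.

```python
import re
from typing import Tuple

# Hypothetical pattern: a note starts with "note-v<digits>:" and runs to the
# next whitespace. Adjust to the system's real secret formats.
NOTE_PATTERN = re.compile(r"\bnote-v\d+:\S+")

PLACEHOLDER = "[REDACTED NOTE]"


def redact(text: str) -> Tuple[str, int]:
    """Replace anything that looks like a note; return (clean_text, redaction_count)."""
    return NOTE_PATTERN.subn(PLACEHOLDER, text)
```

For example, `redact("failed for note-v1:sepolia:0.1eth:deadbeef:1a2b3c4d on sepolia")` keeps the surrounding context and removes the note itself. The count lets the interface tell the user that something sensitive was found and removed before they shared the text.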

The Edge Is Where Trust Is Won Or Lost

Users do not judge privacy systems by circuits alone.

They judge them by whether they can understand what happened when something goes wrong. They judge them by whether the system helps them preserve the data they need. They judge them by whether failure states are explainable. They judge them by whether documentation protects them from unsafe shortcuts.

The circuit can be elegant and the product can still be brittle.

The proof can be sound and the workflow can still fail.

That is why privacy engineering should not stop at cryptographic correctness.

It has to include the messy edges: storage, interfaces, relayers, metadata, user decisions, and recovery boundaries.

Privacy systems fail at the edges because that is where humans touch them.

And if humans are part of the system, the edge is not outside the design.

It is the design.