Why I Recommend RAG Over Fine-Tuning for Credit Analysis

Siddharth B · 6 min read · 1 hour ago


ADR Series — Article 1 of 5

This is the first in a five-part series on Architecture Decision Records — the most underrated tool in a senior engineer’s arsenal. Each article covers one real decision, the reasoning behind it, and why writing it down changes everything.

At some point in almost every AI project in financial services, someone says it.

“Should we just fine-tune the model?”

Honestly — it is a reasonable question. Fine-tuning sounds powerful. You take a general-purpose model, feed it your domain data, and it becomes yours. Smarter about your terminology. Faster on your specific tasks. Better, in theory, at understanding what a credit memo actually means versus what a quarterly earnings report means.

The problem is that “better” is doing a lot of work in that sentence. Better at what, exactly?

I have been deploying AI systems to financial institutions for a while now. And the fine-tuning conversation comes up every single time. Every time, the reasoning for not doing it is the same. And every time, without a written decision record, that reasoning has to be reconstructed from scratch when a new engineer joins, a vendor pitches, or a stakeholder asks.

This article is that reasoning — written down.

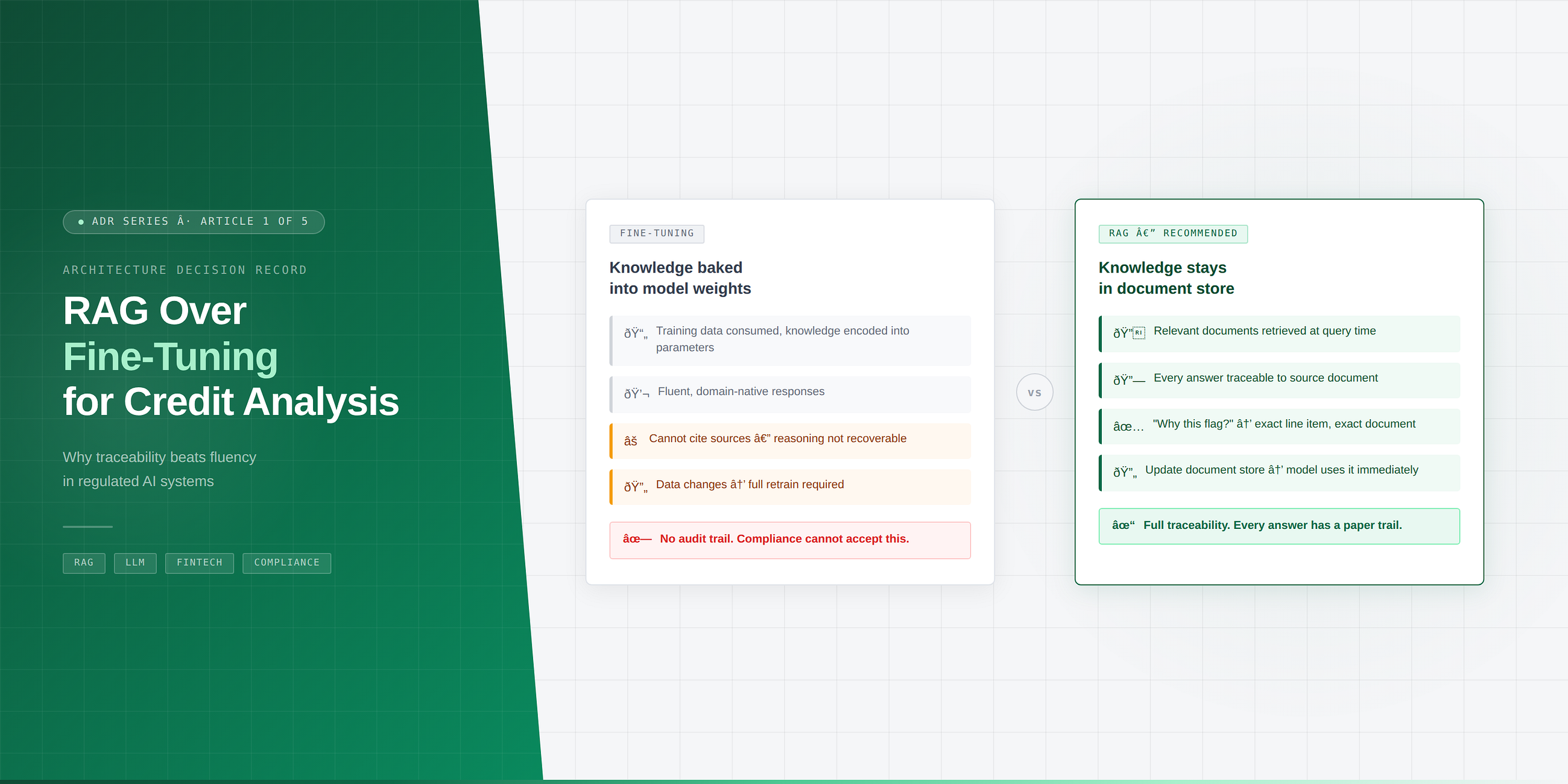

For credit analysis — reading financial statements, extracting risk signals, summarising borrower profiles — I recommend Retrieval-Augmented Generation (RAG) over fine-tuning.

Not because fine-tuning is bad. But because in a regulated environment, fine-tuning optimises for the wrong thing.

What Fine-Tuning Actually Gets You

Fine-tuning makes a model behave differently. You train it on your domain data and it starts producing outputs that look more like your world. Responses feel native. Terminology feels right. A fine-tuned credit model will naturally use terms like debt-service coverage ratio, leverage multiple, and working capital cycle in ways that a general-purpose model sometimes fumbles.

That fluency is real and it is valuable.

But here is the thing nobody tells you upfront — a fine-tuned model cannot show its work.

Ask it why it assessed a borrower as high-risk and it will give you an answer. A confident, fluent, well-structured answer. What it cannot give you is a source. There is no document it can point to. No line item from the financial statement. No specific ratio that triggered the conclusion. The reasoning is baked into the weights somewhere, distributed across billions of parameters, and it is simply not recoverable in any auditable way.

In most industries, that is inconvenient.

In financial services, that is a compliance problem.

What RAG Gets You Instead

RAG keeps the knowledge outside the model. When a query comes in, the system retrieves the relevant documents first — the actual financial statements, the actual credit policies, the actual borrower data — and hands them to the model along with the question. The model’s job is reasoning and synthesis, not memorisation.
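Here is a minimal Python sketch of the retrieve-then-generate flow. It is not a production pipeline. The `Passage` type, the passage IDs, the sample documents, and the keyword-overlap retriever are all illustrative assumptions; a real system would typically use BM25 or embedding search. The model call itself is left out. What the sketch shows is the shape of the idea: evidence is fetched first, tagged with stable IDs, and handed to the model alongside the question.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str      # hypothetical source document identifier, e.g. a filing
    passage_id: str  # stable ID the model can cite in its answer
    text: str


def _tokens(text: str) -> set[str]:
    # Lowercase alphanumeric tokens; a crude stand-in for a real text analyzer.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, store: list[Passage], k: int = 3) -> list[Passage]:
    """Rank passages by keyword overlap with the query (placeholder for BM25/embeddings)."""
    q = _tokens(query)
    scored = [(len(q & _tokens(p.text)), p) for p in store]
    scored = [(s, p) for s, p in scored if s > 0]  # drop passages with no overlap
    scored.sort(key=lambda sp: sp[0], reverse=True)  # stable sort: ties keep store order
    return [p for _, p in scored[:k]]


def build_prompt(question: str, passages: list[Passage]) -> str:
    """Hand the model the retrieved evidence plus the question; the store holds the knowledge."""
    sources = "\n".join(f"[{p.passage_id}] ({p.doc_id}) {p.text}" for p in passages)
    return (
        "Answer using only the sources below and cite passage IDs in square brackets.\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


# Hypothetical sample data, not drawn from any real borrower.
store = [
    Passage("acme-fy2024-annual", "acme-p12", "Total debt rose to 480 million; EBITDA fell to 95 million."),
    Passage("credit-policy-v7", "pol-p3", "Flag borrowers whose leverage multiple exceeds 4.5x EBITDA."),
    Passage("acme-fy2024-annual", "acme-p40", "The company opened two new offices."),
]
question = "Does Acme's leverage exceed the policy limit on EBITDA?"
print(build_prompt(question, retrieve(question, store, k=2)))
```

The model never has to remember Acme's debt figures or the policy limit. Both arrive in the prompt, each with an ID it can cite.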

What this means in practice:

Every answer has a source. Every conclusion traces back to a specific document, a specific passage, a specific number. When a credit analyst asks “why did the system flag this borrower?” — you can show them. Not explain in vague terms. Point to the exact line.
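Traceability can also be checked mechanically. This is a minimal sketch that assumes the prompt asks the model to cite passage IDs in square brackets, a convention chosen here for illustration. It parses the citations out of an answer and confirms that each one refers to a passage that was actually retrieved. An answer with no citations, or with citations to unknown IDs, is treated as untraceable. The `Passage` type and sample data are hypothetical, and the type is redefined so the block runs on its own.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str
    passage_id: str
    text: str


# Assumed citation convention: passage IDs in square brackets, e.g. "[pol-p3]".
CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")


def audit_answer(answer: str, retrieved: list[Passage]) -> dict:
    """Resolve every citation in an answer to the passage it names, or flag it."""
    by_id = {p.passage_id: p for p in retrieved}
    cited = list(dict.fromkeys(CITATION.findall(answer)))  # dedupe, keep first-seen order
    unknown = [c for c in cited if c not in by_id]
    return {
        "sources": [by_id[c] for c in cited if c in by_id],
        "unknown_citations": unknown,
        # Traceable only if the answer cites something and every citation was retrieved.
        "traceable": bool(cited) and not unknown,
    }


retrieved = [
    Passage("credit-policy-v7", "pol-p3", "Flag borrowers whose leverage multiple exceeds 4.5x EBITDA."),
    Passage("acme-fy2024-annual", "acme-p12", "Total debt rose to 480 million; EBITDA fell to 95 million."),
]
report = audit_answer("Leverage is about 5.1x [acme-p12], above the 4.5x limit [pol-p3].", retrieved)
for p in report["sources"]:
    print(f"{p.passage_id} ({p.doc_id}): {p.text}")
```

This check only proves that the citations point at real evidence. Whether the cited passage actually supports the claim is still for the analyst reviewing it to decide.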

That is not just good engineering. In a regulated environment, that is the difference between a system that gets approved and a system that gets shelved.

The Freshness Problem Nobody Mentions

There is a second problem with fine-tuning that gets far less attention than it deserves.

Credit data changes. Constantly. New financial statements every quarter. Updated borrower profiles. Revised risk policies. Macroeconomic conditions that shift what a “healthy” debt ratio even looks like — a perfectly reasonable leverage multiple in a low-rate environment looks very different when rates move.
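A quick worked example shows why rates change what "reasonable" leverage means. Assume, purely for illustration, a borrower carrying 4.0x debt/EBITDA with all of that debt at a single floating rate. Interest coverage (EBITDA divided by interest expense) then reduces to 1 / (leverage × rate). The figures are hypothetical and not taken from any real borrower.

```python
def interest_coverage(leverage_multiple: float, interest_rate: float) -> float:
    """EBITDA / interest expense, assuming all debt is at `interest_rate`.

    debt = leverage_multiple * EBITDA and interest = debt * interest_rate,
    so EBITDA cancels: coverage = 1 / (leverage_multiple * interest_rate).
    """
    if leverage_multiple <= 0 or interest_rate <= 0:
        raise ValueError("leverage_multiple and interest_rate must be positive")
    return 1.0 / (leverage_multiple * interest_rate)


for rate in (0.03, 0.07):
    print(f"4.0x leverage at {rate:.0%}: interest coverage {interest_coverage(4.0, rate):.1f}x")
# 4.0x leverage at 3%: interest coverage 8.3x
# 4.0x leverage at 7%: interest coverage 3.6x
```

The balance sheet is identical in both cases, but the cushion is less than half as large once only the rate moves.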

A fine-tuned model is a snapshot. To update it, you retrain. Retraining takes time, compute, and validation cycles — especially in a regulated context where you cannot just push a new model version and hope for the best.

RAG does not have this problem. You update the document store, and the model works with the new information the next time it retrieves. No model retraining. No model revalidation cycle. The only lag between the world changing and your system knowing about it is the time it takes to ingest and index the new documents.
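This is a minimal sketch of what "update the document store" can mean. It assumes an append-only store keyed by document ID and effective date. Both are hypothetical design choices, not a specific product's API. New knowledge is added as a new version, and the latest version is what the next retrieval sees. The optional `as_of` date lets you reproduce what the system knew when an earlier decision was made, which helps when an auditor asks.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class DocVersion:
    doc_id: str
    effective: date  # date from which this version applies
    text: str


class DocumentStore:
    """Append-only store: new knowledge is a new version, not a retrained model."""

    def __init__(self) -> None:
        self._versions: list[DocVersion] = []

    def add_version(self, doc_id: str, effective: date, text: str) -> None:
        self._versions.append(DocVersion(doc_id, effective, text))

    def latest(self, doc_id: str, as_of: Optional[date] = None) -> Optional[DocVersion]:
        """Newest version of a document, optionally as it stood on `as_of` (for audits)."""
        candidates = [
            v for v in self._versions
            if v.doc_id == doc_id and (as_of is None or v.effective <= as_of)
        ]
        return max(candidates, key=lambda v: v.effective, default=None)


# Hypothetical policy revisions.
store = DocumentStore()
store.add_version("credit-policy", date(2024, 1, 1), "Maximum leverage: 5.0x EBITDA.")
store.add_version("credit-policy", date(2024, 7, 1), "Maximum leverage: 4.5x EBITDA.")
print(store.latest("credit-policy").text)                           # Maximum leverage: 4.5x EBITDA.
print(store.latest("credit-policy", as_of=date(2024, 3, 31)).text)  # Maximum leverage: 5.0x EBITDA.
```

Appending versions instead of overwriting them costs some storage. The benefit is that a past answer can always be replayed against the evidence that existed at the time.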

The Tradeoff You Are Actually Making

I want to be honest about this part, because an ADR is supposed to capture tradeoffs — not just justifications.

Fine-tuning can produce more fluent, domain-native responses. For some use cases — internal tooling, first-draft generation, lower-stakes summarisation where explainability is not the primary concern — that fluency advantage is real and meaningful.

If your use case does not carry a compliance or audit obligation, fine-tuning may be the right call.

But for credit analysis specifically, where every output may be reviewed by a regulator, questioned by an auditor, or used to make a decision that affects a real borrower — fluency is not the priority. Traceability is.

RAG wins on traceability. It is not close.
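For readers new to ADRs, here is this decision condensed into the fields of Michael Nygard's widely used template (title, status, context, decision, consequences) and rendered from a small Python dataclass. The `ADR` class is a hypothetical helper, not a standard tool. The field wording paraphrases this article, and the status value is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class ADR:
    """Hypothetical helper: one Architecture Decision Record in Nygard-style fields."""
    number: int
    title: str
    status: str
    context: str
    decision: str
    consequences: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        lines = [
            f"# ADR {self.number}: {self.title}",
            f"Status: {self.status}",
            "## Context",
            self.context,
            "## Decision",
            self.decision,
            "## Consequences",
        ]
        lines += [f"- {c}" for c in self.consequences]
        return "\n".join(lines)


adr = ADR(
    number=1,
    title="Use RAG rather than fine-tuning for credit analysis",
    status="Accepted",  # assumed; the article does not state a status field
    context=("Outputs may be reviewed by regulators or auditors and affect real borrowers; "
             "financial statements, borrower profiles, and risk policies change every quarter."),
    decision="Retrieve source documents at query time and ground every answer in them.",
    consequences=[
        "Positive: every conclusion traces back to a specific document and passage.",
        "Positive: updating knowledge means updating the document store, not retraining.",
        "Negative: less domain-native fluency than a fine-tuned model.",
        "Revisit: fine-tuning may fit use cases without compliance or audit obligations.",
    ],
)
print(adr.to_markdown())
```

Putting the tradeoff in the Consequences section matters as much as putting the choice in the Decision section.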

Applicability

This reasoning applies beyond credit analysis. Ask yourself three questions about your use case:

1. Does someone need to explain the output? If a decision, recommendation, or classification produced by the AI may need to be justified to a regulator, auditor, manager, or customer — you need traceability. RAG gives you that. Fine-tuning does not.

2. Does the underlying data change frequently? If the knowledge your model needs to reason over updates on a weekly, monthly, or quarterly cycle — the retraining overhead of fine-tuning becomes an operational liability. RAG adapts as fast as you update the document store.

3. Is domain fluency more important than source transparency? If your use case is primarily generative — drafting, summarising, translating — and the output does not carry accountability obligations, fine-tuning’s fluency advantage may genuinely matter more than traceability. That is a legitimate choice. Just write it down.
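The three questions can be read as a rough decision procedure. The sketch below encodes them as a hypothetical `recommend` function. The precedence it uses (explainability first, then data churn, then fluency) is one interpretation of the list, and the fallback message for a use case that triggers none of the questions is an assumption. Treat it as a thinking aid, not a policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    needs_explanation: bool     # Q1: must outputs be justified to a regulator, auditor, manager, or customer?
    data_changes_often: bool    # Q2: does the underlying knowledge update weekly, monthly, or quarterly?
    fluency_over_sources: bool  # Q3: primarily generative, with no accountability obligation?


def recommend(uc: UseCase) -> str:
    # Assumed precedence: an explainability obligation overrides everything else.
    if uc.needs_explanation:
        return "RAG: traceability is required"
    if uc.data_changes_often:
        return "RAG: retraining overhead would become an operational liability"
    if uc.fluency_over_sources:
        return "Fine-tuning may fit: write the tradeoff down in an ADR"
    return "No strong signal either way: write the reasoning down in an ADR"


credit_analysis = UseCase(needs_explanation=True, data_changes_often=True, fluency_over_sources=False)
print(recommend(credit_analysis))  # RAG: traceability is required
```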

The pattern holds outside financial services too. Healthcare AI where diagnostic reasoning needs to be explainable. Legal tech where every conclusion should cite a statute or precedent. Any domain where “the model said so” is not a sufficient answer.

I did not choose RAG because fine-tuning is bad technology. Fine-tuning is genuinely powerful and has the right use cases.

I chose RAG because in a compliance-heavy domain, an answer you cannot explain is not actually an answer. The model’s fluency does not matter if the output cannot be traced, sourced, and defended when someone with a clipboard asks you to justify it.

What made this worth writing down is not the decision itself. It is what happens without the record.

Without it, the fine-tuning conversation restarts every time a new engineer joins and sees the retrieval pipeline. Every time a vendor pitches their fine-tuned domain model. Every time a benchmark comparison shows fine-tuning scoring higher on raw accuracy and someone wonders if we made the wrong call.

The ADR does not shut those conversations down. It makes sure they start from the right place.

Next in this series: ADR #2, "Why We Recommend a Multi-LLM Architecture Over a Single Provider." Compliance will force that decision on you anyway, so better to make it deliberately.

If this was useful, follow for more. I write about software architecture and AI deployment in regulated industries.