The data has been clear for a while. Most people just aren’t paying attention to the right numbers.

There’s something happening underneath all the AI hype that most think pieces miss entirely.

While the industry debates which model benchmark matters and whether AI will replace developers, the engineers actually building the systems that run these models at scale have quietly made a decision. Not Python — that’s for training and research. Not Node.js — that ship has already sailed. They’re writing Go. And they’ve been doing it long enough that the traffic data is now impossible to argue with.

Let’s Start With the Cloudflare Data

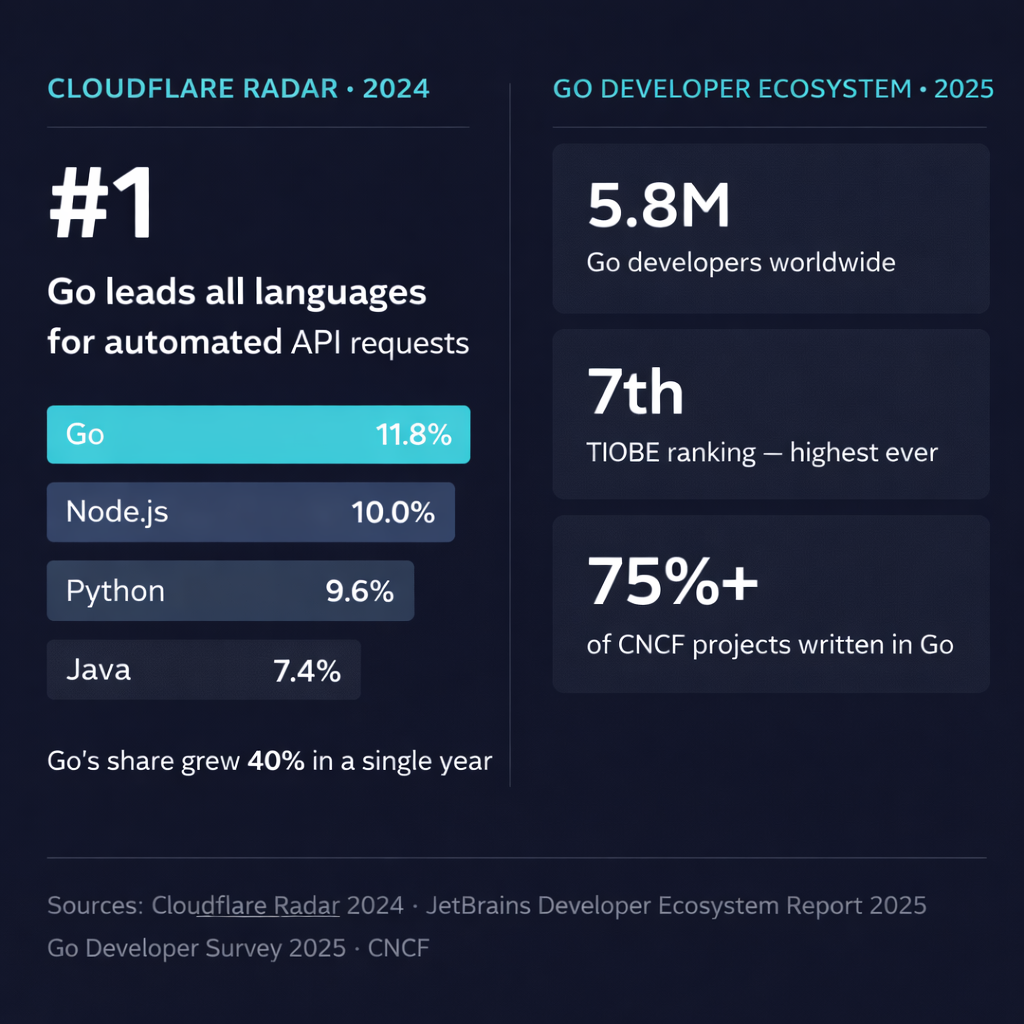

Every year, Cloudflare publishes its Year in Review — not a survey, not a poll, but actual traffic analysis from one of the largest networks on the internet. More than half of all Cloudflare traffic is API-related. In their 2024 edition, they measured which languages power the automated clients making those requests.

Go came first. 11.8% of automated API requests. Node.js was second at 10%, Python third at 9.6%, Java fourth at 7.4%.

Go’s share grew by about 40% in relative terms in a single year. Node.js’s share fell by about 30%.

This is the number worth paying attention to. Not because it means Go “won” — it hasn’t, and that’s not how languages work — but because automated API requests are the most honest signal we have. These are systems running 24/7 without a human in the loop. Engineers chose Go for them because Go earned it in production, not because a blog post told them to.

The Survey Numbers Tell the Same Story

Cloudflare’s traffic data gets more interesting when you stack it next to what developers are actually reporting.

According to JetBrains’ State of Developer Ecosystem Report 2025, Go reached its highest TIOBE ranking ever this year — 7th place, up from 13th in January 2024. Six spots in twelve months.

The 2025 Go Developer Survey, run directly by Google’s Go team with 5,379 respondents, showed that over a third of Go developers are now building cloud infrastructure tooling. 11% are working with ML models, tools, or agents — a category that barely registered in the survey two years ago.

CNCF puts the total Go developer population at around 5.8 million. Stack Overflow’s survey shows Go preferred by 13.5% of developers globally, rising to 14.4% among professionals. These are full-time engineers at companies, not weekend hobbyists.

And Go developers are among the highest-paid in the industry — senior roles in the US regularly exceed $200K. That’s not a cultural fact. It’s a supply-demand fact. There aren’t enough Go engineers for how much companies want to hire them.

The Part Everyone Gets Wrong About Go and AI

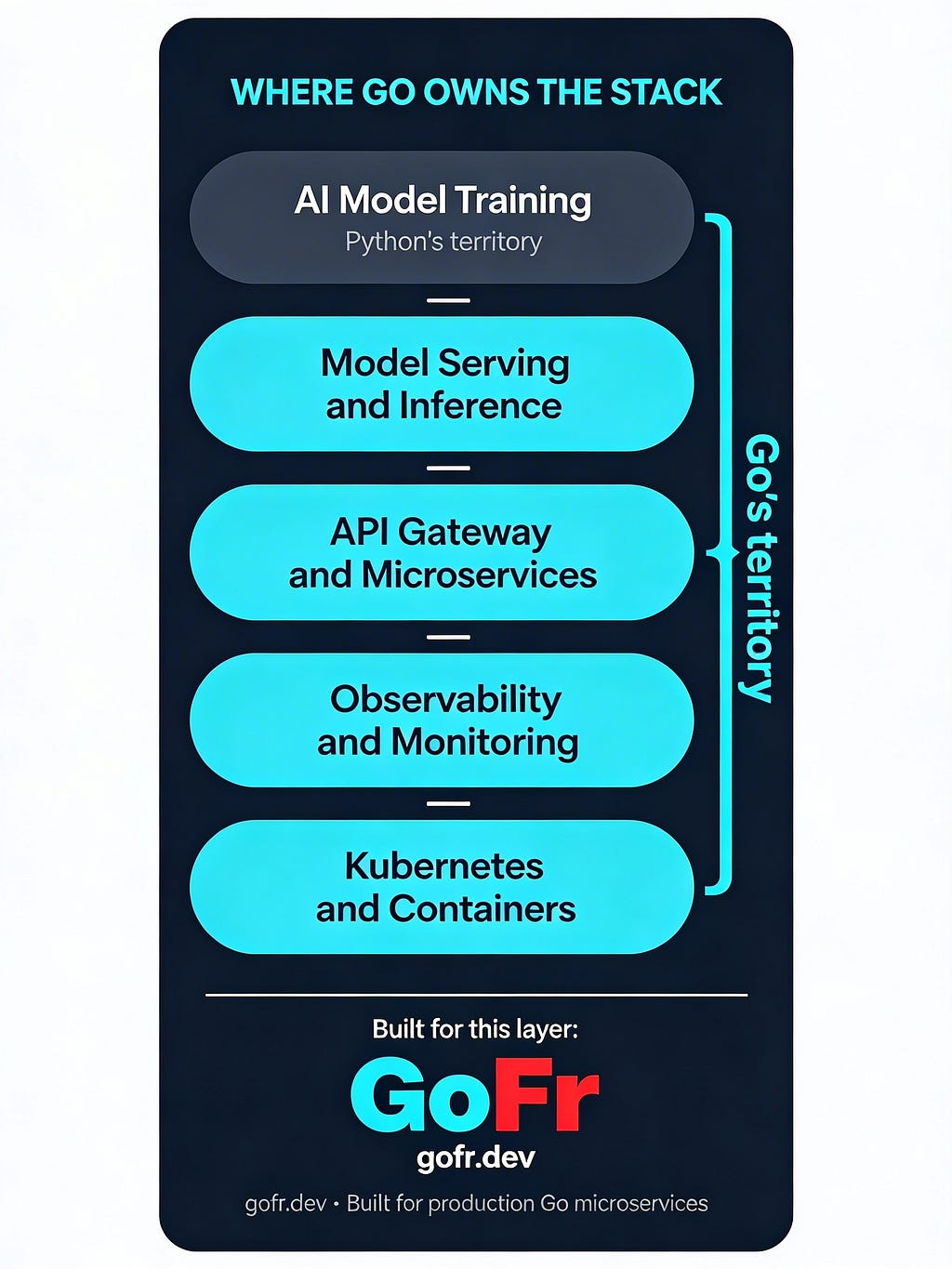

Here’s the framing that keeps tripping people up: Go is not competing with Python for machine learning. That battle was over before it started — Python’s ML ecosystem is too deep, too established, too entrenched in how researchers think and work.

But training a model is not the same as running one.

A model in a Jupyter notebook produces no value. Value comes from inference — millions of requests per day, integrating with databases, routing between services, handling failures gracefully, and doing all of this without your cloud bill going vertical. That layer, between the trained model and the user, is production infrastructure. And production infrastructure is Go’s home territory.
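To make "handling failures gracefully" concrete, here is a minimal sketch of one piece of that serving layer: calling a model under a deadline and degrading to a fallback instead of holding the request open. Everything named here (`Predictor`, `serve`, `fastModel`, `slowModel`, `fallbackAnswer`) is hypothetical and stands in for a real model client; it illustrates the pattern and is not taken from any of the systems discussed in this article.

```go
package main

import (
	"context"
	"errors"
	"fmt"
	"time"
)

// Predictor is a hypothetical stand-in for a call to a model server.
type Predictor func(ctx context.Context, input string) (string, error)

// fallbackAnswer is what callers receive when the model is slow or failing.
const fallbackAnswer = "service busy, please retry"

// serve runs the model under a deadline and degrades to fallbackAnswer
// instead of letting a slow model hold the request open.
func serve(model Predictor, input string, timeout time.Duration) string {
	ctx, cancel := context.WithTimeout(context.Background(), timeout)
	defer cancel()

	type result struct {
		out string
		err error
	}
	done := make(chan result, 1) // buffered: the goroutine never blocks on send
	go func() {
		out, err := model(ctx, input)
		done <- result{out, err}
	}()

	select {
	case r := <-done:
		if r.err != nil {
			return fallbackAnswer
		}
		return r.out
	case <-ctx.Done():
		return fallbackAnswer
	}
}

// fastModel and slowModel simulate a healthy and an overloaded backend.
func fastModel(_ context.Context, input string) (string, error) {
	return "echo: " + input, nil
}

func slowModel(ctx context.Context, _ string) (string, error) {
	select {
	case <-time.After(2 * time.Second):
		return "late answer", nil
	case <-ctx.Done():
		return "", errors.New("model call cancelled")
	}
}

func main() {
	fmt.Println(serve(fastModel, "hi", 200*time.Millisecond))
	fmt.Println(serve(slowModel, "hi", 20*time.Millisecond))
}
```

Goroutines, channels, and `context` deadlines are all in the standard library, which is a large part of why this layer tends to be comfortable to write in Go.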

The real-world numbers bear this out:

Uber migrated their geofencing service from Node.js to Go and cut CPU usage by 50%. Later, working directly with Google to implement Profile-Guided Optimization (PGO) in Go, they saved 24,000 CPU cores across their highest-traffic services. That’s not a performance win. That’s a budget line item.

Cloudflare applied PGO to their Go services and shed approximately 3.5% CPU usage, roughly 97 cores across their infrastructure. At their scale — trillions of requests monthly — small percentages are big money.

Twitch runs over 2 million concurrent live streams and 10+ billion chat messages daily through Go-powered systems. Go runtime improvements gave them a 20x reduction in garbage collection pause times and a 30% drop in CPU utilization.

Capital One switched from Java to Go for credit offer APIs. The result was 70% lower latency, 90% cost savings on infrastructure, and 30% faster delivery speed for their engineering team.

None of these companies switched because they read a language ranking. They switched because the performance difference showed up in their production metrics and their AWS invoices.
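Profile-Guided Optimization, which comes up in the Uber and Cloudflare examples above, is less exotic than it sounds. A minimal sketch, assuming Go 1.21 or later: record a CPU profile of representative work and save it as `default.pgo` in the main package directory, where a plain `go build` picks it up because `-pgo=auto` is the default. The `hotPath` and `writeProfile` functions are hypothetical; production teams usually collect profiles from live services (for example via `net/http/pprof`) rather than from a synthetic loop like this one.

```go
package main

import (
	"fmt"
	"os"
	"runtime/pprof"
)

// hotPath is a hypothetical stand-in for the service's real request handling.
func hotPath(n int) int {
	sum := 0
	for i := 0; i < n; i++ {
		sum += i % 7
	}
	return sum
}

// writeProfile records a CPU profile of work() into the file at path.
func writeProfile(path string, work func()) error {
	f, err := os.Create(path)
	if err != nil {
		return err
	}
	defer f.Close()

	if err := pprof.StartCPUProfile(f); err != nil {
		return err
	}
	// Deferred calls run last-in first-out, so profiling stops
	// before the file is closed.
	defer pprof.StopCPUProfile()

	work()
	return nil
}

func main() {
	// With default.pgo next to the main package, the next `go build`
	// uses it to guide inlining and other optimizations.
	err := writeProfile("default.pgo", func() { fmt.Println(hotPath(50_000_000)) })
	if err != nil {
		fmt.Fprintln(os.Stderr, "profile failed:", err)
		os.Exit(1)
	}
}
```

The resulting gains depend heavily on the workload, so a before-and-after measurement on your own service matters more than any headline percentage.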

Go Was Already Running Your Infrastructure

There’s something worth sitting with here.

Kubernetes is written in Go. Docker is written in Go. Terraform is written in Go. Prometheus is written in Go. Over 75% of Cloud Native Computing Foundation projects are written in Go.

If your AI product runs on Kubernetes — and most do — you’re already in a world built on Go. The container orchestration layer, the monitoring layer, the infrastructure-as-code layer: all Go. When you write your application in Go, you’re not adopting a new ecosystem. You’re aligning with the one that’s been running underneath your stack this whole time.

This is different from most language adoption stories, where you’re moving toward something new. With Go and AI infrastructure, you’re mostly catching up to something already there.

The Boilerplate Problem

Go has an interesting cultural habit: developers are genuinely proud of using the standard library directly. Ask a Go developer which web framework to use and a common answer is “just net/http, you don’t need a framework.”

For a simple API, that’s a fine answer. For a production microservice that needs observability, database connection management, health checks, pub/sub messaging, distributed tracing, and Kubernetes readiness probes — the standard library gets you about halfway there, and the second half is a week of wiring before you’ve written any actual business logic.

This is a real problem. Teams solve it by copying infrastructure code from their last project, or spending time on a problem that has already been solved many times.
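For a sense of what that wiring looks like, here is a minimal sketch of just one item on the list, liveness and readiness probes, written against the standard library. The paths `/healthz` and `/readyz`, the `ready` flag, and the `probe` helper are assumptions chosen for illustration; they are conventions, not requirements of Kubernetes or of any framework.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"sync/atomic"
)

// ready flips to true once dependencies (database, broker, ...) are connected.
var ready atomic.Bool

// newMux wires a liveness and a readiness endpoint by hand.
func newMux() *http.ServeMux {
	mux := http.NewServeMux()
	// Liveness: the process is up and able to answer HTTP.
	mux.HandleFunc("/healthz", func(w http.ResponseWriter, r *http.Request) {
		w.WriteHeader(http.StatusOK)
	})
	// Readiness: only accept traffic once dependencies are connected.
	mux.HandleFunc("/readyz", func(w http.ResponseWriter, r *http.Request) {
		if !ready.Load() {
			http.Error(w, "not ready", http.StatusServiceUnavailable)
			return
		}
		w.WriteHeader(http.StatusOK)
	})
	return mux
}

// probe sends an in-memory GET to the handler and returns the status code.
func probe(h http.Handler, path string) int {
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, httptest.NewRequest(http.MethodGet, path, nil))
	return rec.Code
}

func main() {
	mux := newMux()
	for _, isReady := range []bool{false, true} {
		ready.Store(isReady)
		fmt.Println("ready:", isReady, "/readyz status:", probe(mux, "/readyz"))
	}
	// In a real service you would serve the mux instead:
	// log.Fatal(http.ListenAndServe(":8080", mux))
}
```

This covers one line of the requirements list. Logging, metrics, tracing, connection pooling, and graceful shutdown each need a similar amount of code, which is where the week goes.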

GoFr is a Go framework that starts from this problem and takes it seriously.

What GoFr Actually Does

GoFr is opinionated, which is worth unpacking. Opinionated frameworks make choices for you. That sounds constraining, and for some things it is — if you need to do something unusual, you’ll feel the edges. But the things GoFr constrains are mostly the things you’d spend a week deciding on anyway, and your answer would probably land close to theirs.

In practice, opinionated means your team writes consistent code across services. A new engineer who joins in month three can read any service and understand it. You stop relitigating the same infrastructure decisions.

Here’s what ships with it:

- Observability by default. Structured logging, Prometheus-compatible metrics, and OpenTelemetry distributed tracing are there from the first line of code, with no configuration and no wiring. One developer put it plainly: “GoFr has helped me monitor my applications so easily for which I would have written more than 100 lines of code.” Elsewhere, reaching the same point typically means about two weeks of setup across three libraries.

- Kubernetes-native health checks. GoFr exposes liveness and readiness endpoints that satisfy Kubernetes probe requirements out of the box. You don’t configure them. They’re there.

- Datastore and messaging support that isn’t an afterthought. MySQL, PostgreSQL, Redis, Kafka, MongoDB, MQTT, and Google Cloud services are covered, with connection lifecycle management, migrations, and health checks built into each integration. GoFr is also listed in the CNCF Landscape, which matters if your organization uses that as a filter for tool adoption.

- Interservice communication. Circuit breakers, OAuth support, default headers, service registration — the distributed systems plumbing that every microservice architecture eventually needs and every team writes from scratch at least once.
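To show what "writes from scratch at least once" tends to mean, here is a minimal circuit breaker sketch using only the standard library. It is not GoFr's implementation. `Breaker`, `NewBreaker`, `ErrOpen`, and `errBackend` are hypothetical names, and the half-open handling is deliberately simplified: once the cooldown passes, concurrent callers are all let through rather than a single trial request.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
	"time"
)

// ErrOpen is returned when the breaker rejects a call without trying it.
var ErrOpen = errors.New("circuit open")

// errBackend simulates a failing downstream service.
var errBackend = errors.New("backend unavailable")

// Breaker opens after `threshold` consecutive failures and rejects calls
// until `cooldown` has passed since the most recent failure.
type Breaker struct {
	mu        sync.Mutex
	failures  int
	threshold int
	cooldown  time.Duration
	openedAt  time.Time
}

func NewBreaker(threshold int, cooldown time.Duration) *Breaker {
	return &Breaker{threshold: threshold, cooldown: cooldown}
}

// Call runs fn unless the breaker is open.
func (b *Breaker) Call(fn func() error) error {
	b.mu.Lock()
	open := b.failures >= b.threshold && time.Since(b.openedAt) < b.cooldown
	b.mu.Unlock()
	if open {
		return ErrOpen
	}

	err := fn() // run outside the lock so slow calls do not block others

	b.mu.Lock()
	defer b.mu.Unlock()
	if err != nil {
		b.failures++
		if b.failures >= b.threshold {
			b.openedAt = time.Now() // open, or re-open after a failed trial
		}
		return err
	}
	b.failures = 0 // any success closes the breaker
	return nil
}

func main() {
	b := NewBreaker(2, time.Minute)
	failing := func() error { return errBackend }
	for i := 1; i <= 3; i++ {
		fmt.Printf("call %d: %v\n", i, b.Call(failing))
	}
}
```

Even this small version has to get locking and the open-to-closed transition right, and a production version also needs metrics, per-service configuration, and tests. That is the kind of work a framework can reasonably take off your plate.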

The Hello World is worth showing, because it’s deceptive:

```go
package main

import "gofr.dev/pkg/gofr"

func main() {
	app := gofr.New()

	app.GET("/greet", func(ctx *gofr.Context) (any, error) {
		return "Hello World!", nil
	})

	app.Run() // listens on localhost:8000
}
```

That’s nine lines. That service already has structured logging, distributed tracing, health check endpoints, and metrics exposure. What you write next is your business logic, not more glue.

On Performance

The obvious worry with a batteries-included framework is that you’re paying a speed tax for the convenience.

In benchmarks against Echo — one of Go’s most performance-focused frameworks, essentially a case study in minimalism — GoFr matches or exceeds it on throughput and response times, even with observability features turned on. The framework was built by people running production workloads at scale, not by people optimizing benchmark scores, and that tends to show.

The honest framing, though: if GoFr were 10% slower in raw benchmarks but saved your team a week of infrastructure setup per service, the trade would still be worth making. That it doesn’t appear to require that tradeoff is the better news.

Where This Is Heading

Go is not having a moment. It’s having a decade.

The Cloudflare data, the TIOBE climb, the CNCF project share, the Go team formally naming AI model serving as a strategic focus for the language — these aren’t coincidences. They’re the same story told from different angles. Engineers building systems at scale, under real constraints, are choosing Go. The AI infrastructure boom is, in many ways, a Go boom.

The companies defining what AI in production looks like in 2026 are writing Go services right now. Some of them are writing every piece of infrastructure from scratch. Others are using GoFr and shipping the actual product.

The boilerplate problem is real. The infrastructure layer is already Go. The question is just how much time you want to spend reinventing it.

GoFr is at gofr.dev. The source is at github.com/gofr-dev/gofr.

Why Go Is Becoming the Default Language for AI Infrastructure was originally published in Level Up Coding on Medium.