What if the next great disruption isn’t economic, but cultural? I begin by questioning an idea that seemed too solid to be contested: the very notion of providing services. If I have a robotic assistant capable of executing almost anything with efficiency, consistency, and low cost, does it still make sense to hire third-party services?

This question is not futuristic. It is already silently operating within companies, in creative agencies, legal departments, marketing, software development, and even in activities that required years of tacit learning. The central point is not the technology itself, but what happens when the demand for certain services drops too fast.

The paradox of total efficiency

Automation has always promised to free up human time. But now it touches a deeper nerve: the disappearance of learning by doing. When a service is no longer contracted, it is no longer practiced. When it is no longer practiced, it is no longer taught. And when it is no longer taught, an entire chain of knowledge transmission is lost.

The risk is not just temporary unemployment. It is the progressive erosion of cognitive and craft skills that took decades — sometimes centuries — to consolidate. Strategic copywriting, complex negotiation, systems design, legal thinking, critical analysis, creative engineering: much of this is not in the manuals. It is in the coexistence, in the repetition, in the guided trial and error by someone more experienced.

The Invisible Side Effect of Reduced Demand

Drastically reducing the demand for human services can generate a rarely discussed side effect: the collapse of the training ecosystem.

- Less demand → fewer active professionals

- Fewer professionals → fewer mentors

- Fewer mentors → less knowledge transmission

- Less knowledge → total dependence on automated systems

The result is a vicious cycle. In the short term, efficiency increases. In the medium term, the organization loses repertoire. In the long term, it loses autonomy. Companies that outsource everything to automated systems today may discover, tomorrow, that they no longer have people capable of questioning, auditing, adapting, or reinventing those same systems.
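The delay between these stages can be made concrete with a toy model. The sketch below is purely illustrative: the `demand_drop` and `lag` parameters, the baseline of 1.0, and the update rule itself are assumptions invented for this example, not measurements. It only shows how a fall in demand might travel down the chain with a lag, so that knowledge still looks healthy well after demand has collapsed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ecosystem:
    """Relative level of each link in the chain (1.0 = today's baseline)."""
    demand: float
    professionals: float
    mentors: float
    knowledge: float


def step(state: Ecosystem, demand_drop: float = 0.2, lag: float = 0.5) -> Ecosystem:
    """Advance one period.

    Assumption: demand shrinks by a fixed fraction each period, and every
    downstream link closes a fraction `lag` of the gap to the link above it.
    """
    demand = state.demand * (1 - demand_drop)
    professionals = state.professionals + lag * (demand - state.professionals)
    mentors = state.mentors + lag * (professionals - state.mentors)
    knowledge = state.knowledge + lag * (mentors - state.knowledge)
    return Ecosystem(demand, professionals, mentors, knowledge)


def simulate(periods: int) -> list[Ecosystem]:
    """Return the baseline state followed by `periods` simulated states."""
    history = [Ecosystem(1.0, 1.0, 1.0, 1.0)]
    for _ in range(periods):
        history.append(step(history[-1]))
    return history


if __name__ == "__main__":
    for period, s in enumerate(simulate(5)):
        print(
            f"{period}: demand={s.demand:.3f} professionals={s.professionals:.3f} "
            f"mentors={s.mentors:.3f} knowledge={s.knowledge:.3f}"
        )
```

Under these assumed parameters, demand is down to 0.8 after the first period while knowledge still sits at about 0.975. That gap is the point of the sketch: the damage shows up last where it is hardest to repair.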

The Anatomy of the Void: The Silent Degradation of Governance

The loss of skills does not manifest as a sudden rupture, but as a journey of entropic degradation. It begins in the state of Active Expertise, where the human has total mastery of the variables and uses technology as a mere extension of their will. As we slide into the Delegation phase, the comfort generated by AI’s efficiency begins to atrophy critical thinking; we stop questioning the “how” because the “what” satisfies us. It is the seduction of the quick answer that silences methodological curiosity.

The point of no return is the Erosion of Practice, where knowledge still resides in intellectual memory, but no longer in the hands or instinct. Without “learning by doing”, the company enters a state of Institutional Amnesia: mentors retire and leave no worthy successors, as there was no fertile ground for guided error. The final stage is absolute dependence on the Opaque Box: systems that no one inside the organization knows how to audit, fix, or, if necessary, reinvent from scratch. From this perspective, the “Audit Void” is not just a technical compliance failure; it is a surrender of sovereignty over vital processes, leaving the organization vulnerable to disruptions it won’t even have the repertoire to understand.

Human skills are not just outputs, they are cultural infrastructures

There is a fundamental difference between executing a task and understanding a craft. Crafts carry contextual judgment, ethical sensitivity, reading of nuances, and the ability to improvise under pressure.

These elements are not preserved merely through documentation or trained models. They survive over time through living practices, active communities, and rituals of intergenerational learning. When we think only in terms of cost and productivity, we ignore that human skills function as an invisible infrastructure. You only realize they are gone when you need them and they no longer exist.

Mapping the Terrain: The Craft Preservation and Sovereignty Matrix

Avoiding the generational void requires a tactical discernment that goes beyond the simplistic metric of quarterly cost reduction. It is necessary to map the organizational territory using a Craft Preservation Matrix. Not every task requires the rigor of total human mastery; the fatal strategic error is automating what is vital to the company’s identity and competitive advantage. Tasks involving Ethical Judgment, High-Complexity Negotiation, and Reading Political Nuances are assets of sovereignty; if fully delegated to algorithms, the company loses its technical soul and its capacity to differentiate itself in the market.

The true leadership challenge lies in managing the so-called “Resilience Zone”. These are the activities that AI executes with seductive efficiency, but which the human technical body must continue to practice as a form of “cognitive backup” and readiness maintenance. This is deliberate redundancy: we keep humans practicing certain tasks not out of bureaucratic inefficiency, but to ensure that, should the digital system fail or the global context change drastically, the original intelligence and critical “know-how” are still there to take back the helm. Managing this matrix is an act of executive courage: it is deciding where we will use “Pure Automation” to gain scale and where we will retain “Critical Craft” to protect the intellectual capital that truly guarantees the organization’s long-term survival.
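One way to picture the matrix is as a simple classification rule. The sketch below is a minimal illustration, assuming each task can be described by two yes/no questions; the `Task` fields, the `classify` function, and the example tasks are all hypothetical and are not a formal definition of the matrix.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    PURE_AUTOMATION = "Pure Automation"
    RESILIENCE_ZONE = "Resilience Zone"
    CRITICAL_CRAFT = "Critical Craft"


@dataclass(frozen=True)
class Task:
    name: str
    # Assumption: ethical judgment, high-complexity negotiation, political nuance.
    vital_to_identity: bool
    # Assumption: humans must be able to take over if the AI system fails.
    needed_as_fallback: bool


def classify(task: Task) -> Zone:
    """Place a task in one of the three zones (hypothetical decision rule)."""
    if task.vital_to_identity:
        return Zone.CRITICAL_CRAFT
    if task.needed_as_fallback:
        return Zone.RESILIENCE_ZONE
    return Zone.PURE_AUTOMATION


if __name__ == "__main__":
    # Hypothetical examples, invented for illustration only.
    tasks = [
        Task("Negotiating a strategic partnership", True, True),
        Task("Drafting routine campaign copy", False, True),
        Task("Renaming exported report files", False, False),
    ]
    for task in tasks:
        print(f"{task.name}: {classify(task).value}")
```

The order of the checks encodes the priority described above: anything vital to identity stays a human craft regardless of how well a machine performs it, and only tasks that pass both questions are left to pure automation.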

Thinking about AI requires thinking in intergenerational time

Perhaps the most important question is not “what can AI do now?”, but “what does it prevent us from learning to do in the future?”. A responsible AI strategy is not about halting adoption, but about orchestrating its pace. It is about creating space for humans to continue practicing, teaching, and evolving essential skills, even when a machine can do it faster.

This implies conscious decisions:

- Keeping humans in the loop not out of nostalgia, but for resilience.

- Using AI as a learning amplifier, not as a total substitute.

- Investing in continuous training, even when it doesn’t seem “efficient”.

Organizations that think only about the quarter run the risk of creating an irreversible generational void.

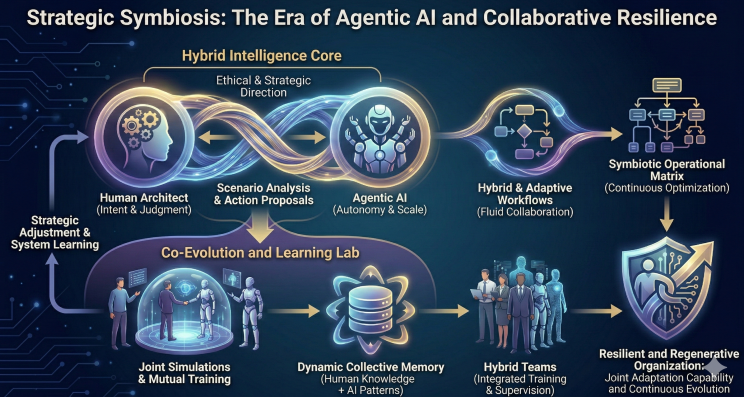

Strategic Symbiosis: Agentic Orchestration and the HITL 2.0 Model

Technological evolution has brought us to the era of Agentic AI, which permanently invalidates the view of AI as a passive “copy and paste” tool. We are now entering the era of Cognitive Symphony, where the relationship shifts from unilateral command-and-control to a dynamic symbiosis of competencies. In this advanced Human-in-the-Loop (HITL) 2.0 model, the human takes on the noble role of Architect of Intention and Judgment, defining ethical guidelines and strategic mission limits, while AI operates with large-scale executive autonomy to optimize workflows.

The fundamental piece of this architecture is not the software, but the “Co-Evolution Laboratory”. Instead of using AI to isolate junior professionals from real practice, we use the outputs — and especially the machine’s hallucinations, biases, and failures — as an educational Sparring Arena. The senior mentor uses the AI’s error as a real-time case study, challenging the junior to identify the contextual flaw or cultural nuance the algorithm failed to perceive. Thus, AI ceases to be the substitute that erases knowledge and becomes the catalyst that accelerates seniority, transforming daily workflow into an ecosystem of continuous learning, where humans and machines evolve together without sacrificing a deep understanding of the business.
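The division of roles described here can be sketched as a small routing rule: a human sets the limits, the agent acts freely inside them, and anything outside them is escalated and kept as teaching material. This is only a sketch under stated assumptions; `Guidelines`, `ProposedAction`, `Orchestrator`, and the two limits (`max_spend`, `restricted_topics`) are hypothetical names chosen for the example, not part of any real agent framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Guidelines:
    """Limits defined by the human 'Architect of Intention' (hypothetical)."""
    max_spend: float
    restricted_topics: frozenset = frozenset()


@dataclass(frozen=True)
class ProposedAction:
    """Something the AI agent wants to do (hypothetical shape)."""
    description: str
    spend: float
    topics: frozenset = frozenset()


@dataclass
class Orchestrator:
    guidelines: Guidelines
    executed: list = field(default_factory=list)
    case_studies: list = field(default_factory=list)  # material for mentor and junior

    def submit(self, action: ProposedAction) -> str:
        """Run the action autonomously if it is within limits, otherwise escalate."""
        over_budget = action.spend > self.guidelines.max_spend
        restricted = bool(action.topics & self.guidelines.restricted_topics)
        if over_budget or restricted:
            # Escalated actions are kept as case studies instead of being discarded.
            self.case_studies.append(action)
            return "escalate"
        self.executed.append(action)
        return "execute"


if __name__ == "__main__":
    rules = Guidelines(max_spend=1000.0, restricted_topics=frozenset({"legal"}))
    orchestrator = Orchestrator(rules)
    print(orchestrator.submit(ProposedAction("Schedule social posts", 50.0)))
    print(orchestrator.submit(
        ProposedAction("Reply to a contract dispute", 0.0, frozenset({"legal"}))
    ))
    print(len(orchestrator.case_studies))
```

The design choice worth noting is the `case_studies` list: the escalation path doubles as the input to the sparring sessions, so the moments where the machine reaches its limits are the ones juniors get to practice on.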

The true strategic asset is not automation, it is continuity

Ultimately, the question isn’t whether we will hire fewer services. That is already happening. The question is what we decide to preserve while it happens. Losing essential human skills is not just a social problem. It is a strategic risk. In a volatile world, systems break, models fail, and contexts change. Those who lack people capable of thinking, rebuilding, and teaching anew will remain trapped by what they no longer understand. Technology advances in rapid cycles. Human wisdom does not. And that is exactly why it must be protected.

Towards Resilience: The Roadmap to Maturity and Legacy

The transition from an “Efficient and Fragile” company to a “Wise and Resilient” organization is not an IT project with a delivery date, but a journey of deep cultural maturity. This path begins at Initial Maturity, where the focus is merely the tactical automation of logical noise, but it must quickly evolve to Structured Maturity, with the rigorous mapping of craft skills that cannot be lost. It is time to create “Craft Centers” where the transmission of knowledge is treated as a governance priority, not an HR operational luxury.

The pinnacle of this journey is Sovereign Maturity, where the company achieves the capacity for continuous regeneration. Here, human knowledge and AI patterns are integrated in such a way that the organization’s legacy does not depend on isolated individuals or external technology providers, but on a Dynamic Collective Memory. Reaching this stage means the company has not only survived the wave of automation but has used it to crystallize and scale its internal wisdom. It is the ultimate guarantee that the sense of urgency, ethical sensitivity, and capacity for improvisation under pressure will remain alive and vibrant, regardless of the speed or direction of the next technological revolution.

Final Reflection: What Survives When Execution Disappears

The progressive replacement of human service provision by robotic assistants does not happen as an abrupt rupture, but as a silent erosion. First, we outsource less. Then, we train less. Finally, we no longer know how to evaluate the quality of what is being done. The problem is not losing human efficiency, but losing the ability to discern when efficiency no longer serves its purpose.

There is a recurring error in treating skills merely as productive units. Skills are living structures that depend on continuous practice, context, and social transmission. When demand disappears, it isn’t just the service that vanishes — the environment where knowledge was refined, questioned, and passed on also disappears. Without this environment, there is no way to “reactivate” a competence in the future simply by turning on a system or hiring a supplier.

Another neglected point is the psychological and cognitive effect of total delegation. When we stop executing certain tasks, we also stop formulating relevant questions about them. The mind adapts to the level of control it possesses. Less control generates less curiosity, less critical thinking, and a lower capacity to intervene when something goes wrong. Dependence is not born from technology, but from the progressive abdication of understanding.

Thinking in terms of intergenerational time completely changes the logic of the decision. A choice that seems rational today — automating everything possible — can generate a desert of competencies in one or two decades. The next generation inherits functional, yet opaque systems, without masters, without references, and without the repertoire to evolve them consciously.

Finally, the central strategic question is not whether AI will replace services, but which human capabilities need to continue being exercised even when they are no longer economically “necessary”. Preserving these capabilities is not a romantic or conservative act. It is a measure of organizational and civilizational resilience, in a world where the unexpected is not the exception, but the rule.

“Once we have surrendered our senses and nervous systems to the private manipulation of those who would try to benefit from taking a lease on our eyes and ears and nerves, we don’t really have any rights left.” - Marshall McLuhan (1911–1980)

Non-Obvious Insights

- The loss of skills begins before unemployment: it starts the moment we stop learning by doing, even if people are still formally employed.

- Excessive automation reduces human auditability, creating organizations that operate systems they can no longer explain or fix.

- Unused knowledge does not remain on standby: it degrades quickly when there is no social and practical context to sustain it.

- Maximum efficiency in the present can generate extreme fragility in the future, especially in scenarios of technological or regulatory disruption.

- Keeping humans in the loop is not wasteful redundancy; it is a form of cognitive and cultural backup.

Key Points

The discussion around AI and services should not be framed solely through cost, scale, or productivity. It demands a broader perspective — one that accounts for knowledge continuity, strategic autonomy, and intergenerational responsibility. To decide what to automate is, simultaneously, to decide what we will stop teaching.

- The decline in demand for human services directly impacts the transmission of knowledge across generations.

- Human skills operate as invisible infrastructure, becoming critical in moments of crisis.

- Automation without preserved learning creates dependency and erodes autonomy.

- An effective AI strategy must consider long cycles, not just short-term gains.

- Preserving crafts and professions is a strategic decision, not merely a cultural one.

Ultimately, true competitive advantage will not belong to those who automate the fastest, but to those who can balance automation with human continuity. The future will favor organizations that recognize some skills must remain alive — even when machines already know how to perform them.

If you lead a company, a team, or an AI strategy, it is worth asking: which human skills must continue to exist when this generation is no longer here to teach them?

The answer may determine who retains autonomy in the future — and who becomes fully dependent on systems they no longer know how to question.

👉 If this perspective resonates with you, let’s connect. Thinking about AI strategy today is, above all, thinking about legacy.

About Renato Azevedo Sant Anna

Architect in Digital Innovation and AI Products, author of Forjando Carreiras de IA, speaker and strategic consultant for retail, technology and SaaS companies. My mission is to help your organization thrive in the new digital era through conscious, strategic and human‑centered innovation.

“The future belongs to those who anticipate, adapt and build.” — Renato Azevedo Sant Anna

When a Service Stops Being a Service: AI, Human Craft, and the Risk of the Generational Void was originally published in DataDrivenInvestor on Medium.