It came from a nearly forty-year-old psychology paper. AI happens to be well-suited to running it.

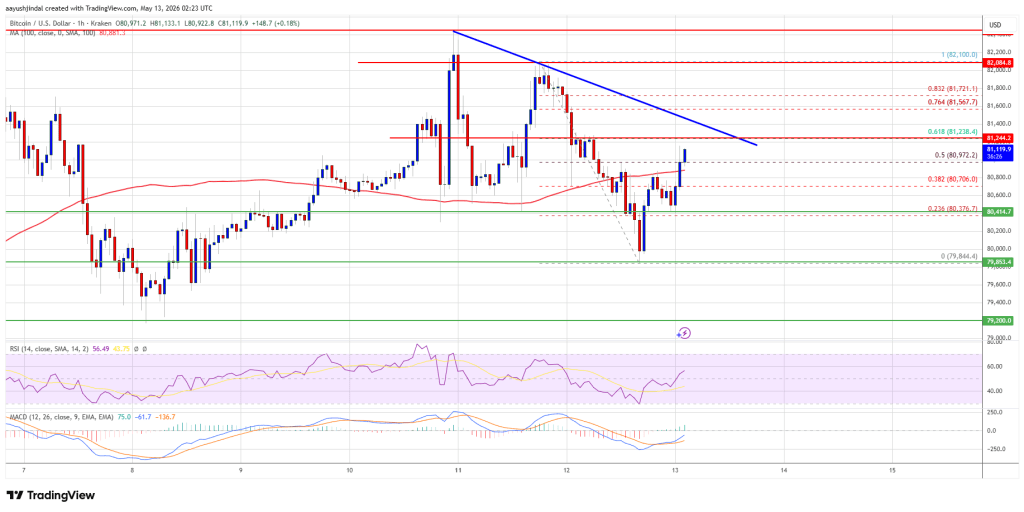

A few months ago I was about to add to a position I’d already been wrong about twice. The thesis still felt clean. The price looked attractive. The chart had what I wanted to see. My finger was on the buy button, and something nagged at me that I couldn’t articulate. So I closed the order, opened Claude, and typed one question.

Eleven minutes later, I decided not to make the trade.

The question wasn’t clever. It wasn’t proprietary. The technique behind it traces back to a 1989 paper by Deborah Mitchell, Jay Russo, and Nancy Pennington — three psychologists who found that imagining an event had already happened (rather than just imagining it might) made people roughly 30% better at identifying the actual reasons it could go wrong. Almost twenty years later, Gary Klein formalized this into a project planning technique called the pre-mortem, published in Harvard Business Review in September 2007. Daniel Kahneman picked it up in Thinking, Fast and Slow and has cited it as one of his preferred decision-making tools.

What I noticed is that AI handles this kind of exercise well. On demand. In fifteen minutes. Alone in your kitchen. That means you no longer need a McKinsey conference room or a team of skeptical colleagues to use a fairly powerful thinking tool. You just need to ask the right question.

What a pre-mortem actually is

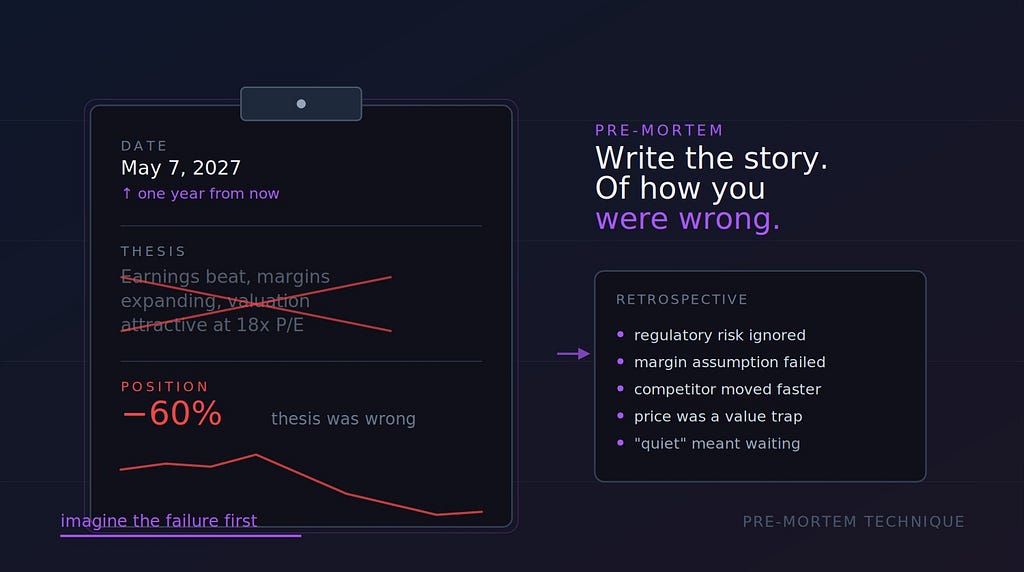

The setup is simple. You imagine it’s a year from now. The decision you’re about to make has gone badly. The position is down 60%. Your thesis was wrong. Now you write the story of how you got there.

That’s the technique.

The reason it works isn’t intuitive at first. A regular “what could go wrong” exercise feels similar but produces worse results. The Mitchell/Russo/Pennington research called the effect prospective hindsight — your brain handles “explain this disaster” differently than “predict possible disasters.” Imagined hindsight, even though it’s made up, unlocks specificity that pure foresight doesn’t.

Kahneman’s contribution was framing this as a debiasing tool. His argument runs roughly like this: the human mind is built for optimism around things we want to be true. When you’ve decided you want to buy NVDA, your reasoning quietly bends to justify that decision — and you don’t notice. A pre-mortem creates structured permission to look for reasons you might be wrong, without the social cost of “negativity” that usually shuts down honest critique inside teams.

For individual investors this matters even more, in a way. Most of us don’t have a partner or team to argue with. The market doesn’t tell you you’re wrong until it’s already taken your money. A pre-mortem gives you something approximating a free, private devil’s advocate.

Why AI fits this exercise

Pre-mortems are awkward to run alone. You can try. You’ll likely do a worse job than you think.

The reason is annoying but obvious once you sit with it: when you imagine your own decision failing, your brain still wants to defend it. You generate weak failure modes that don’t really threaten the thesis. You’ll surface “interest rates spike” or “recession comes” — generic risks any analyst could name — and skate past the specific reasons your particular thesis might be wrong.

A model has none of that loyalty. When you tell it your investment thesis and ask it to write the obituary for that thesis, it has no psychological stake in being polite to your reasoning. It hasn’t held the position for two years. It doesn’t need to feel smart in front of you. It just generates plausible failure stories.

That neutrality is most of the value. Combined with the fact that the model has read enough financial history to know how thesis breakage actually looks in practice, you get something close to a thoughtful friend who happens to know finance well and isn’t worried about hurting your feelings.

There’s a second reason it works that I think is underappreciated. An LLM is well-suited to the cognitive task pre-mortems require: generating plausible narrative chains. “How did this go wrong” is fundamentally a story-completion problem, and narrative text makes up a large share of what these models are trained on. They’re not predicting prices. They’re not picking stocks. They’re explaining a hypothetical past, which they happen to be good at.

The exact question I ask

Here’s the prompt. No proprietary tricks, no special tuning, just the question I keep landing on:

It is one year from today. I bought [TICKER] at $[PRICE] with a thesis based on [YOUR THESIS IN 2–3 SENTENCES]. The position is now down 60%. The thesis was completely wrong. Write a detailed retrospective explaining what I missed, in the voice of a skeptical analyst who saw this coming. Be specific. Don’t list generic risks. Tell me the actual story of how my reasoning was flawed and what evidence was visible at the time that I ignored or rationalized away.

That’s the whole thing. Eight short sentences and three variables.
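You may prefer to keep the prompt as a reusable template so the wording never drifts from one trade to the next. Here's a minimal Python sketch of that. The function name `build_premortem_prompt` and its input checks are illustrative assumptions; they are not part of the original prompt. The prompt wording is the text above, verbatim. The function only assembles the string and doesn't call any model.

```python
def build_premortem_prompt(ticker: str, price: float, thesis: str) -> str:
    """Fill the three placeholders of the pre-mortem prompt.

    Hypothetical helper: the name and the validation rules are assumptions;
    the prompt wording is copied from the article.
    """
    if not ticker.strip() or not thesis.strip():
        raise ValueError("ticker and thesis must be non-empty")
    if price <= 0:
        raise ValueError("price must be positive")
    # Drop a trailing period so the template's own period doesn't double up.
    thesis = thesis.strip().rstrip(".")
    return (
        f"It is one year from today. I bought {ticker.strip().upper()} at ${price:,.2f} "
        f"with a thesis based on {thesis}. The position is now down 60%. "
        "The thesis was completely wrong. Write a detailed retrospective explaining "
        "what I missed, in the voice of a skeptical analyst who saw this coming. "
        "Be specific. Don't list generic risks. Tell me the actual story of how my "
        "reasoning was flawed and what evidence was visible at the time that I "
        "ignored or rationalized away."
    )


# Usage with a made-up ticker and thesis:
print(build_premortem_prompt("acme", 42.5, "margins expand as the new plant ramps up."))
```

The 60% drawdown and one-year horizon are deliberately hard-coded, as in the prompt above. Only the three variables change between trades.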

What comes back is rarely what I expected. Sometimes it identifies a real risk I’d been waving away — and I revise the position size or skip the trade. Sometimes the failure modes it generates aren’t convincing, which actually increases my conviction because the model couldn’t find a plausible way I was wrong. Both outcomes are useful.

The trade I almost made and didn’t? The pre-mortem surfaced a regulatory risk I had vaguely registered but was discounting because the news cycle had been quiet for a few months. The model pointed out that “quiet” usually doesn’t mean resolved — it often means waiting. That risk materialized about six weeks later. Stock dropped 35%.

To be clear: the model didn’t predict the drop. It generated one of many ways the thesis could fail, and that one happened to come true. If I’d run pre-mortems on a hundred trades, the specific failure modes the model named would only sometimes match what actually happens. The discipline of generating them — of forcing the question into existence at all — is what changes decision quality. Whether the specific failure shows up later is almost beside the point.

Why this beats the obvious alternatives

You could just ask AI for a bear case. Most people do. The output is usually a tidy three-bullet list — valuation risk, competitive risk, macro risk — and reading it makes you feel diligent without changing anything. It’s too abstract to be threatening.

You could ask AI to argue against your thesis. The output is better, more pointed, but it tends toward debate-club symmetry. The model gives you a balanced counter-argument, you weigh it against your original thesis, you conclude your thesis is still stronger. Of course you do. You wrote it.

The pre-mortem is different because it asks the model to assume failure has already happened. That single framing change forces specificity. The model can’t hedge with “this might not work because of competition” — it has to commit to a story where competition specifically killed the thesis. Stories with stakes have details. Details are where the actual insight tends to live.

There’s a useful side effect too: it lowers your defensiveness as a reader. When the model is “explaining a past that already happened,” you read it the way you’d read a postmortem someone else wrote about a disaster you weren’t responsible for. Your brain doesn’t activate the defensive reflexes that kick in when someone tells you you’re wrong about something you currently believe. You’re just reading a story. Then, slowly, you realize the story is about you.

What it doesn’t do

A pre-mortem won’t tell you whether to buy or not. That’s still your call. The output is a list of plausible failure modes — you have to weigh them against the upside, your time horizon, your conviction level, your portfolio context, and everything else that goes into a real decision.

It also doesn’t replace research. If you don’t understand the company, no pre-mortem can save you. The model fills in plausible-sounding reasoning gaps, but plausibility isn’t truth. I’ve seen pre-mortem outputs that confidently named risks that didn’t actually exist for the specific company in question. Garbage in, plausible garbage out.

And it won’t help with the trades you should make but won’t. Pre-mortems amplify existing doubts; they don’t generate conviction where there isn’t any. The investor who never pulls the trigger will find new reasons not to in every pre-mortem they run. If you’re already prone to analysis paralysis, this technique makes it worse, not better. Worth knowing before you adopt it.

The frame I keep coming back to

Most investors don’t lose money because they failed to predict the future. They lose money because their reasoning was wrong in ways they could’ve spotted before they put capital at risk. The pre-mortem is a forcing function for spotting those flaws, and AI happens to be a useful partner for running it — it has none of the social or psychological friction that prevents humans from being honest with each other.

Klein invented the technique for teams. His 2007 Harvard Business Review article described a project team in a conference room, each member independently writing down reasons the project had failed. What I think is worth noting is that anyone with $20 a month for a subscription can now run the same exercise alone, on any decision they’re about to make. That’s a tool that used to require a McKinsey engagement, available for the cost of a few cups of coffee.

Try it on your next position. Before you click buy, write the prompt. Read what comes back twice. Then decide.

You won’t always change your mind. That’s fine — that’s not the point. The point is to make sure that when you do place the trade, you’ve actually heard the argument against it. Articulated specifically, with detail, in language you can’t easily wave off. Most of us never get that.

This is the use case I keep coming back to. Not prediction, not stock picking, not portfolio optimization. Just: ask the model to tell you the story of how you were wrong. Listen carefully. Decide.

Eleven minutes well spent on most of my trades.

If you’ve landed on a different prompt that works better for you, I’d genuinely like to hear it. The space of “questions worth asking AI before financial decisions” is much larger than this one technique, and there are likely framings I haven’t tried yet.

The One Question I Now Ask AI Before Every Investment Decision was originally published in DataDrivenInvestor on Medium.