The One Enterprise AI Stack CIOs Are Converging On: Why Operability, Not Intelligence, Is the New Advantage

Why This Conversation Is Accelerating Right Now

Over the last 18–24 months, enterprise AI has crossed a quiet threshold.

AI systems are no longer advisory — they are acting inside real workflows.

As soon as AI can approve, trigger, update, or orchestrate, the core risk shifts.

The CIO’s question is no longer “How smart is the model?”

It becomes “Can we run this safely, repeatedly, and at scale?”

CIOs are converging on one integrated enterprise AI stack because agentic AI must be operated, not just built. The winning stack delivers reusable services-as-software and enforces runtime controls (identity, policy, observability, cost, rollback) plus self-healing operations to scale autonomy safely.

Executive summary

Enterprise AI has crossed a threshold. It’s no longer confined to generating text; it is increasingly taking actions: creating tickets, updating records, triggering workflows, approving requests, and coordinating tools. At that point, the hardest challenge is no longer model capability.

It becomes operability: can the enterprise run autonomy safely, predictably, and economically at scale?

This is why CIOs are converging on one integrated stack: an operating environment that turns AI capabilities into services-as-software (reusable, governed, measurable services) and enables self-healing operations (predict, prevent, recover).

Without this stack, agentic initiatives tend to stall under escalating costs, unclear value, and inadequate risk controls, which is exactly the failure pattern analysts are now warning about. (Gartner)

The agent that was “correct” and still caused an incident

An enterprise launches a “helpful” operations agent. It summarizes incidents, drafts remediation steps, and suggests changes. The pilot goes well, until someone enables a feature that lets it execute actions directly.

One afternoon it:

- updates a configuration it believes is safe,

- triggers a downstream workflow,

- escalates privileges because a tool connector was misconfigured, and

- creates a chain of changes no one can fully reconstruct.

The root cause is not model intelligence. The model did what it was asked to do.

The root cause is simpler, and more uncomfortable:

the enterprise didn’t have a production operating environment for autonomy.

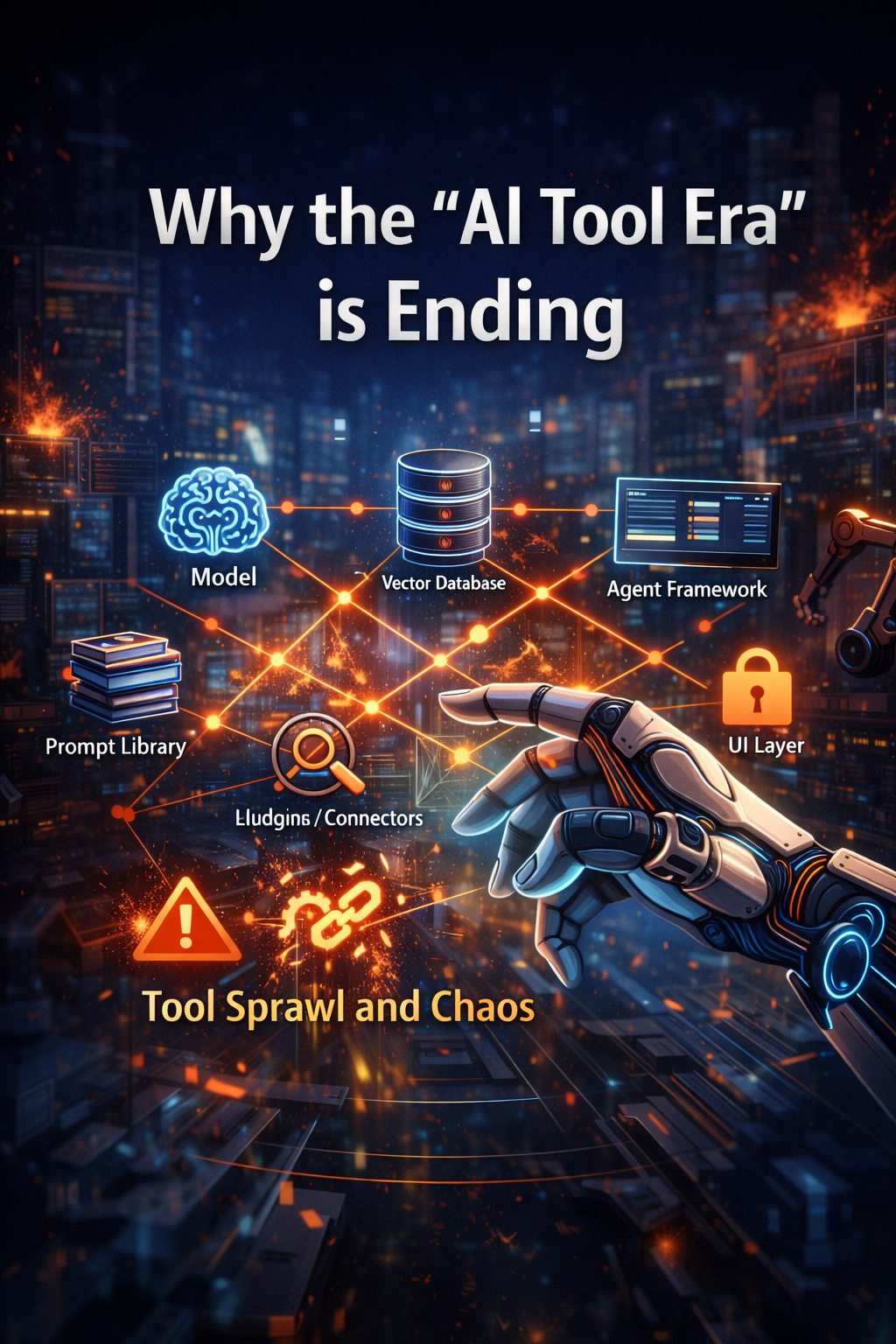

1) Why the “AI tool era” is ending

For the last few years, many enterprise AI programs looked like a shopping list:

- a model

- a vector database

- a prompt library

- an agent framework

- plugins and connectors

- a UI layer

- governance added late

This can produce impressive demos. But it rarely produces durable enterprise capability because the hardest problems live between tools:

- inconsistent identity and access

- fragmented logs and weak audit trails

- unpredictable runtime costs

- brittle integrations

- no reliable rollback

- unclear operational ownership

- “works in testing, fails in production” behavior

When AI only answers questions, those gaps are inconvenient.

When AI takes actions, those gaps become incidents.

2) The action threshold: when enterprise AI becomes enterprise execution

The moment AI can trigger a workflow, approve a decision, or write into a system of record, the enterprise crosses the action threshold.

Three examples that almost every organization recognizes:

Example 1: Vendor Onboarding Agent

Reads a submission, checks required documents, requests missing items, creates a ticket, updates vendor master data.

Risk: a wrong update triggers procurement flows, payment setup, compliance flags.

Example 2: Refund Resolution Agent

Validates eligibility, approves/escalates, triggers payment workflows, records rationale.

Risk: incorrect approval creates loss and governance exposure; incorrect denial creates harm and reputational damage.

Example 3: Access Provisioning Agent

Evaluates requests, grants least privilege, schedules expiry.

Risk: a small policy misread becomes a major security event.

None of these require “human-level intelligence.”

They require something more enterprise-real: controlled execution.

3) The CIO’s real question is no longer “Which model?” It’s “Can we run this safely?”

When autonomy scales, CIO questions become operational:

- Who is the agent? (non-human identity, permissions, separation of duties)

- What did it do? (complete trace of tool calls and decisions)

- Why did it do it? (policy + evidence trail)

- What did it cost? (budgets, throttles, runaway-loop prevention)

- Can we stop it instantly? (kill switch, safe mode, circuit breakers)

- Can we undo it? (rollback, compensating actions)

- Can we reproduce it? (replayability for audit and incident analysis)

- Will it remain stable? (drift across model, tools, and data)

These are stack questions, not point-solution questions.

And they are increasingly urgent: regulators are already highlighting that agentic AI’s speed and autonomy introduce new governance and stability risks. (Reuters)
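
Several of the questions above (who, what, why, what it cost, can we reproduce it) map fairly directly onto a per-call trace record. The following is a minimal sketch in Python, not a reference design. The names `ToolCallRecord` and `AuditTrail`, and every field name, are illustrative assumptions rather than part of any specific product.

```python
import json
import time
from dataclasses import dataclass, field, asdict


@dataclass(frozen=True)
class ToolCallRecord:
    """One audited action. All field names are illustrative assumptions."""
    agent_id: str          # who: the agent's non-human identity
    tool: str              # what: the tool that was invoked
    arguments: dict        # what: the exact inputs, kept so the call can be replayed
    policy_decision: str   # why: which policy allowed the call
    cost_usd: float        # what it cost
    timestamp: float = field(default_factory=time.time)


class AuditTrail:
    """Append-only, in-memory log; a real system would use durable storage."""

    def __init__(self) -> None:
        self._records: list[ToolCallRecord] = []

    def append(self, record: ToolCallRecord) -> None:
        self._records.append(record)

    def by_agent(self, agent_id: str) -> list[ToolCallRecord]:
        return [r for r in self._records if r.agent_id == agent_id]

    def total_cost(self, agent_id: str) -> float:
        return sum(r.cost_usd for r in self.by_agent(agent_id))

    def export_jsonl(self) -> str:
        """Serialize records in insertion order, one JSON object per line."""
        return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in self._records)
```

If every tool call passes through something like `append`, the chain of actions in an incident can at least be listed in order, attributed to an identity, and priced.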

4) The convergence: the one stack enterprises actually need

Across industries and geographies, a clear pattern is forming:

Enterprises are consolidating around one integrated, modular stack that can build AI services safely and run them reliably in production.

This “one stack” is not a single monolithic product. It is an operating environment with consistent rules, reusable building blocks, and production-grade controls.

It delivers two promises that executives immediately understand:

- Services-as-software: stop building one-off AI projects; ship reusable services with ownership, guarantees, and guardrails.

- Self-healing operations: stop treating incidents as surprises; engineer predict-prevent-recover loops with safe rollback and continuous improvement.

5) Services-as-software: the shift from “AI projects” to “enterprise capabilities”

A project ends when someone signs off a demo.

A service begins when the enterprise can depend on it.

What a real AI service includes

A production-grade AI service has:

- A defined job: what it does, and what it refuses to do

- A clear interface: APIs, workflows, and approved tools

- Ownership: who carries the pager (or equivalent accountability)

- Guardrails: policy checks, approvals, boundaries

- SLOs: reliability, latency, acceptable error behavior

- Cost envelope: budgets, throttles, safe mode

- Lifecycle discipline: versioning, testing, audit, retirement

This is why services-as-software becomes the most practical CIO lens: it makes AI governable, measurable, reusable.
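
One way to make the checklist above concrete is to treat it as a manifest that can be validated before a service is listed. This is a sketch under stated assumptions: `ServiceSpec`, `validate`, and all field names are hypothetical, and the checks are deliberately simple.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceSpec:
    """Hypothetical manifest for one AI service; field names are assumptions."""
    name: str
    version: str
    job: str                            # what the service does
    refuses: tuple[str, ...]            # what it explicitly will not do
    owner: str                          # accountable team or on-call rotation
    allowed_tools: tuple[str, ...]      # the approved interface
    approval_required: tuple[str, ...]  # guardrail: tools gated behind a human
    slo_success_rate: float             # e.g. 0.99 means 99% of runs succeed
    slo_p95_latency_s: float
    monthly_budget_usd: float           # cost envelope


def validate(spec: ServiceSpec) -> list[str]:
    """Return a list of problems; an empty list means no problem was found."""
    problems = []
    if not spec.owner:
        problems.append("no owner")
    if not spec.allowed_tools:
        problems.append("no approved tools")
    if not set(spec.approval_required) <= set(spec.allowed_tools):
        problems.append("approval list names tools outside the allowlist")
    if not 0.0 < spec.slo_success_rate <= 1.0:
        problems.append("success-rate SLO must be in (0, 1]")
    if spec.slo_p95_latency_s <= 0:
        problems.append("latency SLO must be positive")
    if spec.monthly_budget_usd <= 0:
        problems.append("budget must be positive")
    return problems
```

A spec with no owner or no cost envelope fails validation, which is the point: ownership and budgets become preconditions for shipping rather than afterthoughts.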

A simple story: “Refund Decisioning” as a service

In a project mindset, you build a bot that “helps agents.”

In a services mindset, you ship a capability called Refund Decisioning:

- Inputs: transaction context, policy rules, customer history

- Actions: validate, approve/escalate, trigger payout workflow, log evidence

- Controls: approval thresholds, edge-case handling, blocked actions

- Monitoring: drift alerts, anomaly detection, rollback readiness

- Evidence: “why” trails, tool-call logs, policy results

Now every channel (chat, email, CRM, contact center tools) can use the same service safely. No reinvention. No shadow versions.
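
To illustrate the approve/escalate control and the “why” trail, here is a small sketch of the decision step. The thresholds, field names, and the `decide` function are assumptions made for illustration; in practice the limits would come from versioned policy, and anything not clearly approvable goes to a human rather than being denied automatically.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    APPROVE = "approve"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class RefundRequest:
    order_id: str
    amount: float
    days_since_purchase: int
    prior_refunds_90d: int


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    evidence: tuple[str, ...]   # the "why" trail: every rule that was evaluated


# Illustrative thresholds only; these values are assumptions.
AUTO_APPROVE_LIMIT = 100.0
RETURN_WINDOW_DAYS = 30
MAX_PRIOR_REFUNDS = 2


def decide(req: RefundRequest) -> Decision:
    """Approve only when every rule passes; otherwise escalate with the reason."""
    evidence = []
    if req.days_since_purchase > RETURN_WINDOW_DAYS:
        evidence.append(f"outside {RETURN_WINDOW_DAYS}-day return window")
        return Decision(Outcome.ESCALATE, tuple(evidence))
    evidence.append("within return window")
    if req.prior_refunds_90d > MAX_PRIOR_REFUNDS:
        evidence.append("refund history exceeds limit")
        return Decision(Outcome.ESCALATE, tuple(evidence))
    evidence.append("refund history within limit")
    if req.amount > AUTO_APPROVE_LIMIT:
        evidence.append("amount above auto-approve limit")
        return Decision(Outcome.ESCALATE, tuple(evidence))
    evidence.append("amount within auto-approve limit")
    return Decision(Outcome.APPROVE, tuple(evidence))
```

Because the function returns evidence alongside the outcome, every channel that calls it gets the same decision and the same audit material.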

6) Pre-engineered enterprise intelligence: the fastest path to scale

Here’s a quiet truth: most organizations do not need to invent every agent from scratch.

The biggest acceleration comes from pre-engineered intelligence blocks: templates, patterns, and service modules that already “know” enterprise reality:

- how identity and permissions typically work

- what audit evidence must look like

- where integrations break

- which guardrails matter most

- which failure modes keep recurring

A useful analogy: cloud computing did not win because compute existed.

It won because teams could adopt pre-built services (identity, monitoring, queues, databases) without rebuilding fundamentals.

Enterprise AI is reaching the same moment.

7) Self-healing operations: autonomy must be reversible and recoverable

If AI can act, your system must be engineered for safe failure. Failure is inevitable. What matters is containment and recovery.

Self-healing does not mean “the system magically fixes everything.”

It means the enterprise designs for:

- Predict: detect anomalies before they become incidents

- Prevent: block unsafe actions automatically

- Recover: roll back or compensate for changes safely

- Learn: reduce recurrence via better tests, policies, and controls
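
The “Recover” step can be sketched as a journal of compensating actions, similar in spirit to the saga pattern: each applied change registers how to undo it, and a failure triggers the undo steps in reverse order. `CompensationJournal` and `apply_changes` are hypothetical names, and the sketch assumes every action has a known inverse, which is not always true in real systems.

```python
from typing import Callable


class CompensationJournal:
    """Records an undo step for each applied action."""

    def __init__(self) -> None:
        self._undo_stack: list[tuple[str, Callable[[], None]]] = []

    def run(self, name: str, do: Callable[[], None], undo: Callable[[], None]) -> None:
        """Apply an action and remember how to reverse it, only if it succeeded."""
        do()
        self._undo_stack.append((name, undo))

    def rollback(self) -> list[str]:
        """Undo applied actions in reverse order; return the names undone."""
        undone = []
        while self._undo_stack:
            name, undo = self._undo_stack.pop()
            undo()
            undone.append(name)
        return undone


def apply_changes(journal: CompensationJournal, steps) -> bool:
    """Run (name, do, undo) steps in order; on failure, compensate what was applied."""
    try:
        for name, do, undo in steps:
            journal.run(name, do, undo)
    except Exception:
        journal.rollback()
        return False
    return True
```

The design choice worth noting is that the undo step is registered only after the action succeeds, so a rollback never tries to reverse something that was never applied.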

The “Policy Helper” incident (a common enterprise pattern)

An assistant is asked to resolve an exception. It tries to help. It drafts a resolution and then applies changes “to speed things up.”

Then you discover:

- it used an over-privileged service account

- it changed records in the wrong place

- it triggered downstream workflows

- nobody can reconstruct the chain of actions

A self-healing stack prevents this by design:

- non-human identity per agent/service

- least privilege + tool allowlists

- circuit breakers when confidence drops or anomalies rise

- full event logs for every tool call

- replayable traces for audit and debugging

- rollback paths and compensating actions

This is the practical difference between “AI adoption” and “AI operability.”
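
Two of the controls listed above, tool allowlists and circuit breakers, can be sketched in a few lines. This is an illustration under assumptions, not a production guard: `GuardedToolbox` and `ActionBlocked` are hypothetical names, and the breaker here trips on consecutive tool failures, a simpler stand-in for the confidence and anomaly signals mentioned in the list.

```python
class ActionBlocked(Exception):
    """Raised when the guard refuses to execute a tool call."""


class GuardedToolbox:
    """Wraps tool functions with an allowlist and a simple circuit breaker.

    The breaker trips on consecutive tool failures. Tripping on low confidence
    or anomaly scores would need signals this sketch does not model.
    """

    def __init__(self, agent_id, tools, allowlist, max_failures=3):
        self.agent_id = agent_id
        self._tools = tools                     # tool name -> callable
        self._allowlist = frozenset(allowlist)  # least privilege: explicit grants only
        self._max_failures = max_failures
        self._consecutive_failures = 0
        self.tripped = False

    def call(self, tool_name, **kwargs):
        if self.tripped:
            raise ActionBlocked(f"{self.agent_id}: circuit open, actions halted")
        if tool_name not in self._allowlist:
            raise ActionBlocked(f"{self.agent_id}: {tool_name} is not allowlisted")
        try:
            result = self._tools[tool_name](**kwargs)
        except Exception:
            self._consecutive_failures += 1
            if self._consecutive_failures >= self._max_failures:
                self.tripped = True
            raise
        self._consecutive_failures = 0
        return result

    def reset(self):
        """Manual re-enable, intended for use after a human has reviewed the incident."""
        self._consecutive_failures = 0
        self.tripped = False
```

Once the breaker is open, even allowlisted calls are refused until someone calls `reset`, which keeps a misbehaving agent contained while the incident is examined.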

8) The six layers of the one stack

To make the idea concrete, here is what CIOs are converging on functionally:

Layer 1: A build environment that produces reusable services

Standardized templates, governance-by-design, versioning, testing harnesses.

Layer 2: A runtime kernel that enforces control

Identity, policy checks, audit logs, budget throttles, safe mode, rollback hooks.

Layer 3: A service catalog (with maturity levels)

Approved services, owners, contracts, usage policies, guardrail tiers.

Layer 4: Quality engineering for autonomy

Behavioral testing, simulation of edge cases, tool-failure drills, regression tests across prompt/model/tool changes.

Layer 5: Security and compliance by design

Least privilege, sensitive-action gating, evidence trails, incident replay readiness.

Layer 6: Operations that can detect, contain, recover

Monitoring agent behavior, anomaly detection, drift monitoring, containment playbooks, automated rollback/compensation, learning loops.

This structure also aligns with where global governance is heading: lifecycle risk management, human oversight, and evidence-grade record keeping are increasingly expected for higher-risk systems. (NIST)
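
As one small illustration of the runtime kernel in Layer 2, the budget throttle can be sketched as a guard that is charged before each step and halts a run once a cost or step limit would be crossed. `BudgetGuard`, `BudgetExceeded`, and `run_steps` are hypothetical names, and the per-step cost is assumed to be an estimate available before the step executes.

```python
class BudgetExceeded(Exception):
    """Raised when a run would cross its cost or step limit."""


class BudgetGuard:
    """Tracks spend and step count for one agent run against fixed limits."""

    def __init__(self, max_cost_usd: float, max_steps: int) -> None:
        self.max_cost_usd = max_cost_usd
        self.max_steps = max_steps
        self.spent_usd = 0.0
        self.steps = 0

    def charge(self, cost_usd: float) -> None:
        """Call before each step; raises if the step would cross a limit."""
        if self.steps + 1 > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} reached")
        if self.spent_usd + cost_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost limit {self.max_cost_usd} USD reached")
        self.steps += 1
        self.spent_usd += cost_usd


def run_steps(steps, guard: BudgetGuard) -> str:
    """Execute (estimated_cost_usd, action) pairs until done or the guard halts the run."""
    for cost, action in steps:
        try:
            guard.charge(cost)
        except BudgetExceeded:
            return "halted"
        action()
    return "completed"
```

The step limit matters as much as the cost limit: an agent stuck in a loop of cheap calls is stopped by the former long before the latter would notice.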

9) Open and evolving architecture: why lock-in is the silent killer

Enterprise AI will evolve faster than traditional enterprise change cycles:

- models will improve

- tool ecosystems will shift

- security protocols will evolve

- governance expectations will tighten

- workflows will be redesigned

So the winning stack needs a crucial property:

It must absorb new models, tools, and protocols without re-architecting the enterprise.

This requires abstraction:

- abstract models (swap without rewriting everything)

- abstract prompts and policies (versionable, testable)

- abstract tools (governed tool registry, allowlists)

- integration patterns that avoid hardwiring one vendor’s assumptions
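
The first item, abstracting models so they can be swapped without rewriting everything, might look like the sketch below. It assumes a single-method interface, which is far narrower than real model clients. `TextModel`, `ModelRegistry`, and the two stand-in model classes are hypothetical and do not correspond to any vendor SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface that service code depends on; the name is an assumption."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for one vendor's client."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """Stand-in for a different vendor's client."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class ModelRegistry:
    """Maps a stable alias to whichever model currently backs it."""

    def __init__(self) -> None:
        self._models: dict[str, TextModel] = {}

    def register(self, alias: str, model: TextModel) -> None:
        self._models[alias] = model

    def get(self, alias: str) -> TextModel:
        return self._models[alias]


def summarize_ticket(registry: ModelRegistry, ticket_text: str) -> str:
    """Service code names the alias, never a vendor class."""
    return registry.get("summarizer").complete(f"Summarize: {ticket_text}")
```

Swapping the model behind the `summarizer` alias changes the output but not `summarize_ticket`, which is the property that keeps a model change from becoming a re-architecture.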

The CIO fear is not “choosing wrong.”

It is making an irreversible bet that becomes technical debt.

10) Partner-ready, not vendor-bound

No enterprise builds this stack alone.

The strongest operating environments are partner-ready by design:

- internal product teams build on standard templates

- system integrators implement safely without reinvention

- technology partners connect through consistent interfaces

- governance teams rely on uniform evidence and controls

This is how capability scales without scaling chaos.

11) A practical adoption path that doesn’t slow delivery

The common mistake is trying to build “the perfect platform” for two years.

A more effective path:

Step 1: Choose 2–3 high-volume workflows

Ticket triage, vendor onboarding, access provisioning, refund exceptions.

Step 2: Ship them as services, not pilots

Define boundaries, owners, SLOs, guardrails, and cost envelopes.

Step 3: Add runtime controls early

Non-human identity per service, audit logs for every tool call, tool allowlists, safe mode + rate limits, approvals for sensitive actions.

Step 4: Add self-healing loops

Incident replay, containment playbooks, rollback/compensation, drift monitoring.

Step 5: Expand the catalog and standardize templates

This is where speed increases, because teams reuse proven patterns instead of rebuilding fundamentals.

Conclusion: The CIO advantage is operability at scale

The future of enterprise AI will not be decided by who adopts AI fastest, but by who operates it best.

As AI moves from insight to execution, enterprises will converge on one inevitable architecture:

an integrated, self-healing, services-as-software stack that turns intelligence into dependable enterprise capability.

This is no longer just a technology decision.

It is an operating model decision.

FAQ

Q1) What is the “one enterprise AI stack” CIOs are converging on?

An integrated operating environment that builds AI as reusable services and runs autonomy with production-grade controls: identity, policy enforcement, observability, auditability, cost governance, rollback, and self-healing operations.

Q2) Why do agentic AI pilots fail after successful demos?

Because demos rarely prove operability. Without runtime controls, audit trails, cost envelopes, rollback, and ownership, autonomy breaks at scale. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 because of cost, unclear value, or inadequate risk controls. (Gartner)

Q3) What does services-as-software mean for enterprise AI?

Packaging AI-enabled capabilities as production services with defined interfaces, owners, guardrails, SLOs, and lifecycle governance, so teams can reuse them safely across workflows.

Q4) What is self-healing operations in the agentic era?

Predict-prevent-recover loops with anomaly detection, automated containment, replayable traces, and rollback/compensating actions, so autonomy stays reversible and incidents stay manageable.

Q5) How do governance expectations affect enterprise AI stacks globally?

Frameworks and regulations increasingly emphasize lifecycle risk management, human oversight, and evidence-grade documentation, pushing enterprises toward integrated controls rather than ad-hoc toolchains. (NIST)

Glossary

- Agentic AI: AI systems that plan and take actions via tools and workflows, not only generate text.

- Services-as-software: Productized, reusable services with ownership, guardrails, and operational guarantees.

- Runtime kernel: The production layer that enforces identity, policy, logging, budgets, throttles, and safe modes.

- Self-healing operations: Predict-prevent-recover loops with containment, replay, and rollback readiness.

- Service catalog (also called an agent catalog): A discoverable set of approved reusable AI services/agents with contracts and maturity levels.

- Policy-as-code: Machine-enforceable policies that determine what actions are allowed and what requires approval.

- Human oversight: Controls that allow people to monitor, intervene, and override higher-risk AI behavior. (AI Act Service Desk)

- AI management system: An organizational system for governing AI risk and continuous improvement across the lifecycle. (ISO)

References and further reading

- Gartner press release: Over 40% of agentic AI projects will be canceled by end of 2027 (cost, unclear value, inadequate risk controls). (Gartner)

- NIST: AI Risk Management Framework (AI RMF) — trustworthy AI risk management across lifecycle. (NIST)

- ISO: ISO/IEC 42001:2023 — AI management systems standard. (ISO)

- EU AI Act guidance on human oversight expectations for higher-risk systems. (AI Act Service Desk)

- Reuters reporting on regulators flagging agentic AI speed/autonomy risks in financial services (illustrative of rising governance focus). (Reuters)

- Studio-to-Runtime: Why Enterprise AI Fails Without a Build Plane and a Production Kernel — Raktim Singh

- The New Enterprise AI Advantage Is Not Intelligence — It’s Operability — Raktim Singh

- The Enterprise AI Factory: How Global Enterprises Scale AI Safely with Studio, Runtime, and Productized Services — Raktim Singh

- The Advantage Is No Longer Intelligence — It Is Operability: How Enterprises Win with AI Operating Environments — Raktim Singh

- Services-as-Software: Why the Future Enterprise Runs on Productized Services, Not AI Projects: By Raktim Singh (Medium, December 2025)

- The Composable Enterprise AI Stack: Agents, Flows, and Services-as-Software — Built Open, Interoperable, and Responsible: By Raktim Singh (Medium, December 2025)

- Enterprise IT Is Becoming an App Store: From Projects to Services-as-Software: By Raktim Singh

- Enterprise AI Fabric: Why AI Is Shifting from Applications to an Operational Layer: By Raktim Singh

- The AI Platform War Is Over: Why Enterprises Must Build an AI Fabric — Not an Agent Zoo — Raktim Singh

This article was originally published on raktimsingh.com and is republished here to reach a broader global CIO and enterprise architecture audience.

Originally published at https://www.raktimsingh.com on December 22, 2025.

This article also appeared in DataDrivenInvestor on Medium.