Strip away the press releases, the supercomputer keynotes, and the breathless Davos panels, and what you find underneath AI’s trillion-dollar boom is surprisingly mundane: a small number of companies writing large checks to each other, an electric grid that hasn’t been meaningfully updated since the 1970s, and a growing chorus of enterprise CFOs quietly asking where the return on investment went.

This is a report about friction between the digital and the physical, between the promise and the proof, between the hype and the hardware.

Marketing Math

Gift-Card Economy: How Mega-Rounds Are Built on Cloud Credits

Follow the money closely enough, and you’ll start to see the same dollar bills cycling through the same wallets.

The mechanics are elegant in their circularity:

- Microsoft invests $13+ billion in OpenAI.

- OpenAI spends the majority on Azure, Microsoft’s cloud platform, creating guaranteed, captive demand for Microsoft’s own infrastructure.

- A portion of the original investment comes home before OpenAI’s server racks even warm up.

This is not a fringe critique. It is how the ecosystem openly operates, and as of March 2026, it just got structurally locked in for another decade.

When OpenAI completed its for-profit conversion in October 2025, the new deal’s terms crystallised the arrangement.

Microsoft now holds a 27% stake worth approximately $135 billion in the new OpenAI PBC, and OpenAI is contracted to purchase an incremental $250 billion in Azure services going forward, with Microsoft no longer even holding a right of first refusal to be OpenAI’s compute provider.

OpenAI is effectively a captive customer embedded in its own cap table.
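The deal terms quoted above imply a couple of numbers that are worth making explicit. The following is a minimal arithmetic sketch using only the figures in the text (a 27% stake, valued at roughly $135 billion, and a $250 billion Azure commitment); the function and variable names are illustrative, and the outputs are only as precise as the rounded inputs.

```python
# Figures quoted in the text above, in USD billions (rounded in the source).
STAKE_FRACTION = 0.27
STAKE_VALUE_BN = 135.0
AZURE_COMMITMENT_BN = 250.0


def implied_valuation(stake_value_bn: float, stake_fraction: float) -> float:
    """Company valuation implied by a stake's value and its ownership share."""
    return stake_value_bn / stake_fraction


valuation_bn = implied_valuation(STAKE_VALUE_BN, STAKE_FRACTION)

# How large the contracted Azure spend is relative to the value of the stake.
commitment_vs_stake = AZURE_COMMITMENT_BN / STAKE_VALUE_BN

print(f"Implied OpenAI valuation: ${valuation_bn:.0f}B")
print(f"Azure commitment vs. stake value: {commitment_vs_stake:.2f}x")
```

On these inputs, the stake implies a valuation of about $500 billion, and the contracted Azure spend is a little under twice the value of Microsoft’s holding.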

As DataCenterDynamics noted after reviewing the deal terms, the partnership “refines and adds new provisions,” but the Azure pipeline flows harder than ever.

“Microsoft has basically been running a scam with OpenAI: they invest $10 billion, but it’s in the form of Azure credits, which OpenAI then cashes in and Microsoft books as $10 billion in revenue.”

— Naked Capitalism analysis of the Microsoft–OpenAI restructuring · January 7, 2026

The inference spending numbers, first reported by Ed Zitron’s newsletter in December 2025 from documents he reviewed, are staggering in what they reveal.

OpenAI spent $5.02 billion on Azure inference alone in just the first half of CY2025, more than it earned externally in all of 2024 ($3.76B revenue). By the end of Q3 2025, that Azure inference bill had reached $8.67 billion. The full CY2024–Q3 2025 total: $12.43 billion.
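The three cumulative figures above can be broken into periods with simple subtraction. This is a rough sketch that assumes the numbers are cumulative over the windows the text names (H1 CY2025, CY2025 through Q3, and CY2024 through Q3 2025); the variable names are illustrative.

```python
# Azure inference spend quoted in the text, in USD billions.
H1_2025_BN = 5.02                # first half of CY2025
THROUGH_Q3_2025_BN = 8.67        # CY2025 through the end of Q3
CY2024_TO_Q3_2025_BN = 12.43     # CY2024 plus CY2025 through Q3

# Spend attributable to Q3 2025 alone.
q3_2025_bn = round(THROUGH_Q3_2025_BN - H1_2025_BN, 2)

# Spend attributable to CY2024, implied by the combined total.
implied_cy2024_bn = round(CY2024_TO_Q3_2025_BN - THROUGH_Q3_2025_BN, 2)

# Q3 2025 compared with the average quarter in H1 2025.
avg_h1_quarter_bn = H1_2025_BN / 2
q3_vs_h1_quarter = q3_2025_bn / avg_h1_quarter_bn - 1

print(f"Q3 2025 inference spend: ${q3_2025_bn:.2f}B")
print(f"Implied CY2024 inference spend: ${implied_cy2024_bn:.2f}B")
print(f"Q3 2025 vs. average H1 2025 quarter: {q3_vs_h1_quarter:+.1%}")
```

On these inputs, Q3 2025 alone comes to about $3.65 billion, roughly 45% above the average H1 quarter. The implied CY2024 inference spend works out to $3.76 billion, the same number the paragraph attaches to 2024 revenue, so it is worth checking the underlying documents to confirm which quantity that figure describes.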

“OpenAI’s inference costs have easily eclipsed its revenues,” Zitron wrote in a piece that was not contradicted by either Microsoft or OpenAI.

This loop extends well beyond the OpenAI-Microsoft axis. Bloomberg’s January 2026 graphic investigation documented the full web:

- NVIDIA announced a $100 billion investment in OpenAI, paid in GPUs destined for OpenAI’s data centers, guaranteeing NVIDIA’s own chips stay scarce and highly priced.

- Amazon has held talks to invest at least $10 billion in OpenAI, alongside a commercial relationship where OpenAI taps Amazon for compute.

- Anthropic received $8 billion from Amazon and designated AWS as its primary cloud provider; Anthropic is now also contractually purchasing $30 billion in Azure compute.

As TechCrunch’s February 2026 infrastructure deep-dive noted of the Nvidia-GPU arrangement: “If that seems circular, it’s because it is.”

The most recent data point underscores the structural scale.

According to Constellation Research’s analysis of Microsoft’s Q2 FY2026 earnings, OpenAI now represents 45% of Microsoft’s entire remaining performance obligations: $625 billion in total, up 110% year-over-year.
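To put the percentages in dollar terms, here is a small sketch using only the three figures quoted above ($625 billion total, a 45% OpenAI share, 110% year-over-year growth). It assumes the growth rate applies to total remaining performance obligations; the names are illustrative.

```python
# Figures quoted in the text, USD billions.
TOTAL_RPO_BN = 625.0
OPENAI_SHARE = 0.45
YOY_GROWTH = 1.10  # "up 110% year-over-year"

# OpenAI's portion of the backlog, and everything else.
openai_rpo_bn = TOTAL_RPO_BN * OPENAI_SHARE
other_rpo_bn = TOTAL_RPO_BN - openai_rpo_bn

# Total backlog one year earlier, implied by the growth rate.
prior_year_rpo_bn = TOTAL_RPO_BN / (1 + YOY_GROWTH)

print(f"OpenAI share of RPO: ${openai_rpo_bn:.1f}B")
print(f"All other customers: ${other_rpo_bn:.1f}B")
print(f"Implied prior-year total RPO: ${prior_year_rpo_bn:.1f}B")
```

On these inputs, OpenAI’s share is about $281 billion, not far short of the roughly $298 billion that the growth rate implies for Microsoft’s entire backlog a year earlier.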

Anthropic accounts for much of the balance. Microsoft’s cloud business is, in a meaningful sense, a derivatives play on whether AI lab spending continues to accelerate.

When an infrastructure company’s biggest customers are also its biggest investors, the standard financial vocabulary for separating “revenue” from “capital recycling” stops doing useful work.

Bloomberg’s January 2026 investigation concluded that the trend “looks poised to continue,” with Amazon holding talks on a further $10B OpenAI investment.

The FTC and EU are now both investigating Microsoft’s “history of circular investments in AI startups”, though no enforcement action has yet followed.

Physical Limits

The Grid Crisis: When the Bubble Hits a Wall Built in 1972

You can raise as much venture capital as you want, but you cannot run a data center on a PowerPoint deck.

The most concrete, underreported, and structurally significant constraint on AI’s growth trajectory is the one that predates ChatGPT by about fifty years: the United States electric grid.

This is no longer a theoretical concern; it is the operational reality reshaping where data centers get built, how fast AI can scale, and who pays for the privilege.

Clift Pompee, VP of power and emissions at Compass Datacenters, stated in DataCenterKnowledge’s 2026 industry outlook that “most of the grid was built between the 1950s and 1970s, and today, approximately 70% of the grid is approaching the end of its life cycle.”

Carnegie Mellon University’s dispatch on AI energy research confirmed that the US Department of Energy projects data center electricity demand will double or triple in the next few years, potentially reaching 12% of total US electricity consumption by 2028, up from under 4% today.

The epicentre of the crisis is Northern Virginia.

According to a WebProNews investigation, Loudoun County, which handles an estimated 70% of the world’s internet traffic, is now facing interconnection delays of four to seven years for new data center projects.

Dominion Energy has explicitly “warned that it cannot build new generation and transmission infrastructure fast enough to keep pace.”

A single modern AI data center in the region can consume as much power as 100,000 homes.

The political fallout is real: Virginia’s 2026 legislative session has produced multiple bills to force data center operators to fund their own dedicated generation assets before receiving grid interconnection approval.

“I think it’s almost inevitable, the way that these structures are set up, that ordinary people are going to end up subsidizing the wealthiest industry in the world.”

— Cathy Kunkel, energy analyst, NPR Planet Money · January 2, 2026

The PJM Interconnection, the largest US grid operator, serving 67 million people across 13 eastern states, has formally elevated “large loads” to a board-level priority with accelerated stakeholder processes.

According to CNBC’s analysis of the energy pricing debate, the EIA confirmed that “retail electricity prices have increased faster than the rate of inflation since 2022, and we expect them to continue increasing through 2026.” PJM’s Base Residual Auction mechanism means consumers are effectively pre-paying two years in advance for the electricity that AI companies will consume, a cost socialisation arrangement that makes the retired schoolteacher in Richmond a de facto subsidiser of Meta’s training clusters in Ashburn.

The corporate response has been revealing.

Bismarck Analysis, writing in late December 2025, noted that the entire US data center sector currently draws under 15 gigawatts of power, but the pipeline of new construction would, if fully built, require the US grid to add nearly 20% more peak capacity (from 760 GW today).
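The scale gap in those figures is easier to see as arithmetic. This is a back-of-the-envelope sketch that treats “under 15 gigawatts” and “nearly 20%” as round upper bounds, so the results are approximate; the variable names are illustrative.

```python
# Figures quoted in the text; both bounds are approximate.
US_PEAK_CAPACITY_GW = 760.0
PIPELINE_SHARE_OF_PEAK = 0.20   # "nearly 20%" more peak capacity
CURRENT_DC_DRAW_GW = 15.0       # sector currently draws "under 15 GW"

# Peak capacity the grid would need to add if the pipeline were fully built.
added_capacity_gw = US_PEAK_CAPACITY_GW * PIPELINE_SHARE_OF_PEAK

# That addition expressed as a multiple of today's data center draw.
multiple_of_current_draw = added_capacity_gw / CURRENT_DC_DRAW_GW

print(f"Added peak capacity needed: ~{added_capacity_gw:.0f} GW")
print(f"Multiple of current data center draw: ~{multiple_of_current_draw:.1f}x")
```

On these inputs, the pipeline would call for roughly 150 GW of additional peak capacity, on the order of ten times what the whole sector draws today.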

Oracle is building Stargate data centers in Abilene, Texas, with on-site natural gas generation specifically to avoid grid dependency.

xAI built its own hybrid power plant in Memphis. When a $500 billion infrastructure project needs to bring its own power station, the grid itself has become a limiting reagent, not a utility.

What’s happening in Loudoun County today is the national playbook arriving in sequence

Dominion Energy’s integrated resource plan, per the WebProNews March 2026 investigation, shows data centers account for “the vast majority of new electricity demand” in its territory, with residential and commercial load essentially flat.

Virginia’s current regulatory framework spreads the cost of new generation and transmission across all ratepayers, not the customers driving the demand.

OpenAI’s next-generation systems, by the company’s own projections, will require computing clusters that consume gigawatts, quantities previously associated with entire metropolitan areas.

The demand curve is not flattening.

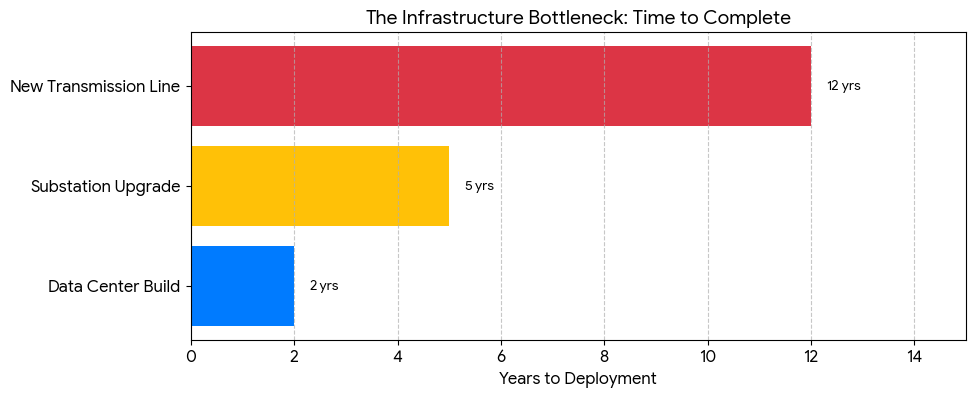

And as Drexel University assistant professor Ali Hasan noted in a March Q&A: “Power plants and transmission projects can take a decade or more to complete, while data centers can be deployed much faster.”

This asymmetry is the core crisis.

The Indian Pivot

From SaaS Factory to Sovereign Stack: India’s ₹10,372 Crore Bet

For most of the 2010s, the dominant narrative around Indian tech was a simple one: build something in Bengaluru, sell it to a US SaaS buyer, exit via acquisition.

The ecosystem was a supplier, not a creator.

That playbook is being loudly, publicly rewritten, and as of mid-March 2026, with more substance behind it than most Western observers have acknowledged.

The pivot point was precise.

In January 2025, ten days after DeepSeek-R1 demonstrated that frontier-class AI could be built outside the US hyperscaler ecosystem at a fraction of the assumed cost, India’s government moved fast.

The IndiaAI Mission, a programme passed by Cabinet in March 2024 with an initial ₹10,000 crore allocation, received an additional top-up in the 2026–27 Union Budget, bringing its total to ₹10,372 crore.

The mission empanelled over 38,000 GPUs across 14 service providers in data centres in Mumbai, Hyderabad, Bengaluru, Noida, and Jamnagar, treating compute as “a public good for inclusive AI growth.”

Expansion plans will add 20,000 more GPUs.

The flagship moment came in February 2026 at the India AI Impact Summit in New Delhi.

Bengaluru-based Sarvam AI unveiled two models built from scratch with IndiaAI Mission compute resources: a 30-billion-parameter model for real-time conversational use and a 105-billion-parameter flagship, trained by a core team of approximately 15 researchers using 4,096 Nvidia H100 SXM GPUs provisioned through Yotta Data Services.

BusinessToday’s cover story reported that the 105B model “reportedly outperforms global counterparts in Indian language code-switching” and that co-founder Pratyush Kumar said plainly: “We’ve reached an inflection point. We’ve trained a model that is competitive.”

“Sovereignty matters much more in AI than building the biggest models.”

— Vivek Raghavan, co-founder, Sarvam AI, at India AI Impact Summit · February 2026

Five companies, Sarvam AI, Gnani.ai, BharatGen, Fractal Analytics, and Tech Mahindra, announced sovereign models at the Summit.

BharatGen, a non-profit consortium led by IIT Bombay with nine other leading Indian institutions, launched Param 2, a 17-billion-parameter model covering 22 Indian languages, with open documentation and Hugging Face repositories.

BharatGen received ₹989 crore from the IndiaAI Mission, the largest single tranche awarded.

India’s position in Stanford’s Global AI Vibrancy Tool has climbed from seventh to the top three globally between 2023 and 2025.

But the story is not without its complications.

The “sovereign AI” label, like most nationalist technology narratives, contains a considerable amount of marketing.

Jaspreet Bindra, founder of AI & Beyond, cautioned in BusinessToday that “developing models of this scale, with relatively low cost and limited GPUs, is a significant achievement”, but explicitly warned against “directly comparing Indian models with global leaders such as GPT-4, Gemini, Claude, or DeepSeek purely on size or completeness.”

BharatGen.com’s own March 2026 analysis identified the harder questions:

- How open will these models actually be?

- Can they sustain themselves economically against heavily capitalised global players?

- Does training on Nvidia H100s require ongoing US export licence compliance?

Why “sovereign AI” is only as sovereign as its Nvidia licence allows

Sarvam’s 105B model was trained on Nvidia H100 SXM GPUs.

Krutrim’s ambition to build the Bodhi-1 domestic AI accelerator chip remains a work in progress.

Meanwhile, in the Indian AI startup landscape, the “current market is dominated by full-stack players who control their own compute infrastructure”, but that infrastructure still runs on foreign silicon.

The Union Budget’s long-term tax holidays for data centre and cloud investments will accelerate build-out.

But the foundational dependency, on Nvidia chips designed in California, manufactured in Taiwan, subject to US export controls, is not yet solved by any policy tool available to the Government of India.

Sovereignty of deployment is not the same as sovereignty of the supply chain.

The Talent Vacuum

Acqui-Hire Industrial Complex: $40B+ to Buy What You Can’t Build

There is a resource scarcity problem at the heart of the AI boom that no amount of capital can immediately solve: the number of people who genuinely understand how to build frontier AI systems is vanishingly small.

The combined demand from OpenAI, Google DeepMind, Meta AI, Anthropic, xAI, Microsoft Research, and dozens of well-funded startups exceeds that pool many times over.

The result is a talent market so distorted it has produced its own genre of corporate transaction: the acqui-hire.

The mechanics are simple.

A startup employs a team of rare specialists who have built not just individual expertise but shared knowledge, intuition, and working chemistry.

Buying the company costs more upfront but delivers the intact team within weeks.

Microsoft integrated Inflection AI’s entire research team “within weeks” after a $650 million transaction.

Google’s $2.7 billion arrangement with Character.AI was primarily about repatriating Noam Shazeer, a co-author of the transformer architecture who had left Google to found the company.

OpenAI completed nine acquisitions in a single year; in nearly every case, the product was wound down, and the team joined.

“When Microsoft handed over $650M to Inflection, they weren’t after its chatbot; they wanted Mustafa Suleyman and his AI research team.”

— Founders Forum analysis · September 2025

When OpenAI announced on January 8, 2026, that it had acquired the team behind Convogo, an executive coaching tool, the company explicitly confirmed it was not acquiring the IP or technology.

The value was the people.

OpenAI separately poached at least seven engineers from Cline, a coding startup, in a hiring spree that “effectively gutted a significant portion” of the company’s technical team without any transaction occurring at all.

The deeper question is whether this constitutes innovation or expensive talent consolidation that concentrates capability inside incumbents while starving the broader ecosystem.

When researchers who might build the next breakthrough are systematically absorbed by the companies that control the current frontier, the long-term innovation pipeline narrows even as short-term capability accelerates.

Why acqui-hires are structured to avoid merger review, and why it matters in 2026

Traditional mergers attract antitrust attention when they combine market share, assets, or customer relationships.

Acqui-hires are structurally designed to avoid this: the product is wound down (no customer transfer), the IP is often not conveyed (no classic asset acquisition), and the transaction is structured as “licensing and hiring” rather than a purchase.

Microsoft’s Inflection deal, Google’s Character.AI arrangement, and OpenAI’s team acquisitions all used variations of this structure specifically to reduce regulatory surface area.

The FTC under Lina Khan moved to scrutinise several of these deals but did not block them.

FinancialContent’s analysis notes that the FTC and EU continue to investigate Microsoft’s “circular investments”, but with no enforcement action to date, the acqui-hire market has reached record deal volumes.

The Reckoning

2026: The Year the Bill Comes Due

The most uncomfortable conversation happening in corporate boardrooms right now is a simple one: we have spent three years and hundreds of billions of dollars on AI, and the CFO cannot find it on the income statement.

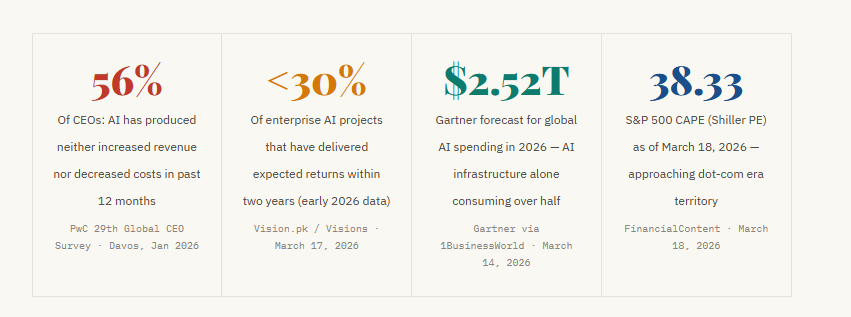

According to PwC’s 29th Global CEO Survey, released at Davos in January 2026, 56% of chief executives report that AI has produced neither increased revenue nor decreased costs over the past twelve months. Only 12%, PwC’s “AI Vanguard”, have achieved both.

Early 2026 data gathered by Visions suggests that fewer than 30% of enterprise AI projects have delivered expected returns within two years.

This is the Compute Tax in operation.

Fortune reported in December 2025 that companies are spending between $590 and $1,400 per employee annually just on AI tools, before accounting for GPU time, data engineering, model fine-tuning, and integration.

Kyndryl’s research across 3,700 executives found that 61% of CEOs are under increasing pressure to show returns on AI investments, up significantly year over year.

Gartner’s latest forecast, cited by 1BusinessWorld, places global AI spending at $2.52 trillion for 2026, a 44% jump from the prior year, with AI infrastructure alone consuming more than half.

The era of “experimental budgets” is ending. As Kyndryl’s Michael Bradshaw told Fortune: “Most firms are not positioned to [achieve ROI in 2026]. That would be my prediction.”

“While 2025 rewarded ‘AI mentions’ in earnings calls, 2026 is strictly demanding ‘AI margins.’”

— Bank of America analysts · via FinancialContent, March 18, 2026

The S&P 500 is sitting between 6,700 and 7,000, retreating from early January peaks, with a CAPE ratio of 38.33.

FinancialContent’s analysis notes this remains below the dot-com era’s 44.0 peak, but that the market has entered a “show me the margins” mandate.

The current trailing P/E of 26.5–28.5x against a 10-year average of 18.9x is what FinancialContent labels “ROI Fatigue” territory.
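The valuation figures above translate into premiums as follows. This is a minimal sketch using only the quoted numbers (trailing P/E of 26.5 to 28.5x, a 10-year average of 18.9x, CAPE of 38.33 against a dot-com peak of 44.0); the helper name is illustrative.

```python
# Figures quoted in the text.
PE_LOW, PE_HIGH = 26.5, 28.5
PE_TEN_YEAR_AVG = 18.9
CAPE_NOW = 38.33
CAPE_DOTCOM_PEAK = 44.0


def premium(current: float, baseline: float) -> float:
    """Fractional premium of a current multiple over a baseline multiple."""
    return current / baseline - 1


pe_premium_low = premium(PE_LOW, PE_TEN_YEAR_AVG)
pe_premium_high = premium(PE_HIGH, PE_TEN_YEAR_AVG)

# How close today's CAPE sits to the dot-com peak.
cape_vs_peak = CAPE_NOW / CAPE_DOTCOM_PEAK

print(f"P/E premium over 10-year average: {pe_premium_low:.1%} to {pe_premium_high:.1%}")
print(f"CAPE as a share of dot-com peak: {cape_vs_peak:.1%}")
```

On these inputs, the trailing multiple sits about 40% to 51% above its 10-year average, and the CAPE ratio is at roughly 87% of its dot-com peak.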

Bank of America analysts’ observation that 2025 rewarded “AI mentions” while 2026 demands “AI margins” is not a prediction. It is a live market signal.

The macroeconomic backdrop makes this worse, not better.

The Bureau of Economic Analysis revised US Q4 2025 GDP down to 0.7% annualized, half the initial estimate, and a sharp deceleration from 4.4% in Q3.

Consumer spending rose only 2.0%.

A 43-day partial government shutdown subtracted approximately one percentage point from the quarter. And Brent crude, per 1BusinessWorld’s March 14 analysis, closed at $103.14, after surging roughly 50% following US-Israel joint strikes on Iran that disrupted roughly 20% of global oil supply.

Every dollar of AI capex that doesn’t produce a measurable return is now worth less than it was at any point since this boom began.

Forrester’s chief research officer, Sharyn Leaver, stated plainly in a 2026 report: “In 2026, the AI hype period ends as the pressure to deliver real, measurable results from secure AI initiatives intensifies.”

Medium contributor Yash Batra, writing in February 2026, summarised the structural problem:

“Between 2023 and 2025, capital moved faster than proof. Venture funding poured more than $180 billion into AI-labelled startups globally.”

The reckoning is not a forecast. It is the present tense.

The 2026 Thesis

2026 is not the year AI dies. It is the year the market stops paying for possibility and starts demanding proof. The World Economic Forum’s January 2026 analysis of a potential AI bubble burst concluded the fallout would be “primarily a financial market and media event” with limited immediate macroeconomic consequence — but also warned that “smaller banks that have lent to AI companies” would face heightened risk. What separates the survivors from the casualties is not better models; it is repeatable unit economics, measurable workflow impact, and the ability to translate “productivity gains” into numbers a CFO can take to a board meeting without apology.

The Survivors

Foundational vs. Wrapper: Who Lives When the VC Money Dries Up

FinancialContent’s market analysis articulated the distinction with unusual clarity: the market has begun to separate the “AI Factories” from the “AI Tourists.”

NVIDIA remains the primary beneficiary of the infrastructure buildout, with the technology sector as a whole expected to post 31.1% YoY earnings growth in 2026.

Meta Platforms has continued to gain favour as it leverages AI to drive ad-targeting efficiencies that directly impact the bottom line.

Conversely, Microsoft and Alphabet face “intense scrutiny” as they spend over $200 billion annually on AI infrastructure, while enterprise adoption “remains largely in the early stages.”

Yishan Wong, former Reddit CEO, articulated the structural thesis in November 2025 in terms that Medium’s January 2026 reckoning analysis called “proving prophetic”: “Every AI application startup is likely to be crushed by the rapid expansion of the foundational model providers. Unlike past tech waves (PC, internet, mobile), AI’s foundational tech hasn’t stabilized. Sea changes happen every 9–12 months, obsoleting apps before they scale.”

The Medium analysis noted: “Single-purpose AI agents for tasks like email or scheduling are being commoditized overnight by foundational model updates from big players.”

Sify’s January 2026 “Great AI Correction” analysis made the distinction between the correction and a collapse: “The AI-washers, the companies that slap AI into everything, will fall by the wayside. And the money that flowed easily, like the 70% VC fund in early 2025 going to AI, those will face forensic scrutiny in 2026.”

Visions’ analysis agreed: “The three most credible signals are circular financing, sky-high P/E ratios in the semiconductor sector, and lack of measurable ROI from enterprise AI deployments.”

The wrapper category satisfies none of the survival criteria.

The foundational layer, by contrast, is sitting on physical assets, power contracts, proprietary data, and irreplaceable talent that accrue value regardless of whether the current hype cycle sustains or corrects.

Conclusion

The Friction Is the Feature

The AI industry’s fundamental tension, between digital ambition and physical constraint, between headline valuations and underlying economics, between the promise of transformation and the reality of slow enterprise adoption, is not a bug in the story.

It is the story.

The evidence across all six dimensions points in the same direction: the correction has begun, it is uneven, and the physical constraints are the last constraints to resolve.

The questions worth tracking through the remainder of 2026 are the ones the press conferences don’t answer:

- How much of the reported AI revenue is recycled investment capital versus external organic demand?

- Which data center projects will actually get built versus which will remain in a pipeline that exists primarily on paper?

- How long can enterprise AI budgets absorb negative ROI before boards force consolidation?

- And who, when the music stops, will be left holding actual assets (power contracts, proprietary data, irreplaceable talent) versus beautiful interfaces built on borrowed infrastructure and borrowed time?

Bottom Line

AI is real.

The technology works.

The use cases exist.

But the financial structure built around it (circular capital, inflated valuations, wrapper businesses with no moat) is increasingly unstable.

The power grid, the enterprise ROI gap, and the talent bottleneck are not temporary friction points to be engineered around.

They are the physical reality that the market must eventually price correctly.

That pricing event, as of this writing, is not coming.

It has arrived.

A Bit About Me

I’m Dilpreet Grover.

I’m someone who loves exploring ideas through code, design, and whatever medium feels right that day. I spend most of my time building things that make life a bit simpler or spark curiosity, often blending structure with imagination.

If you’d like to connect or check out some of my work, feel free to visit my website.

Until next time,

Adios!

Sources & References

- Bloomberg — “AI Circular Deals: How Microsoft, OpenAI and Nvidia Keep Paying Each Other” · bloomberg.com

- OpenAI — “The next chapter of the Microsoft–OpenAI partnership” (PBC conversion terms, $250B Azure contract) · openai.com

- DataCenterDynamics — “OpenAI completes for-profit move, Microsoft given 27% stake and $250bn Azure contract” · datacenterdynamics.com

- wheresyoured.at — “OpenAI’s $12.43B Inference Spend and Revenue Share” (documents reviewed) · wheresyoured.at

- Constellation Research — “Microsoft Q2 strong, Azure 39%, OpenAI is 45% of RPO” · constellationr.com

- FinancialContent — “The AI Infrastructure Titan: Comprehensive Research on Microsoft (MSFT)” · financialcontent.com

- TechCrunch — “The billion-dollar infrastructure deals powering the AI boom” · techcrunch.com

- GTM360 Blog — “AI Didn’t Invent Circular Deals” · gtm360.com

- Carnegie Mellon University — “How CMU Is Curbing Energy Demands From AI Data Centers” · cmu.edu

- WebProNews — “Northern Virginia’s Power Crisis Is Now America’s Problem: How Data Centers Broke the Grid” · webpronews.com

- CNBC — “Who is really footing the AI energy bill? Inside the debate about data center electricity costs” · cnbc.com

- Common Dreams — “US Electric Grid Heading Toward ‘Crisis’ Thanks to AI Data Centers” · commondreams.org

- DataCenterKnowledge — “2026 Predictions: AI Sparks Data Center Power Revolution” (incl. Compass Datacenters VP quote) · datacenterknowledge.com

- NPR Planet Money — “AI data centers use a lot of electricity. How it could affect your power bill.” · npr.org

- Drexel University — “Q+A: Can AI Save the US Energy Grid From AI Data Centers?” · newsblog.drexel.edu

- BusinessToday — “The Sarvam Moment: How India’s Homegrown AI Startup Is Challenging ChatGPT, Gemini” · businesstoday.in

- Indian Defence News — “IndiaAI Mission Accelerates Indigenous AI Innovation” (Budget figures, GPU pool) · indiandefensenews.in

- BharatGen.com — “From LLMs to Verticalisation: India Sovereign AI Stack Takes Shape in 2026” · bharatgen.com

- 1BusinessWorld — “The Great AI ROI Reckoning” (PwC survey, Gartner $2.52T, macro data) · 1businessworld.com

- FinancialContent — “The AI Reckoning: S&P 500 Battles ROI Fatigue Amid Historic Valuations” (CAPE 38.33, BofA quote) · financialcontent.com

- Fortune — “The big AI New Year’s resolution for businesses in 2026: ROI” (Kyndryl 3,700 exec survey) · fortune.com

- TechTarget — “An AI bubble burst? Early warning signs” (Forrester prediction; Bittner wave analysis) · techtarget.com

- Medium / Iram Ahmed — “The AI Reckoning: Why the Bubble is Bursting in 2026” (Yishan Wong thesis; wrapper commoditization) · medium.com

- Sify — “The Great AI Correction of 2026: Why the ‘Bubble’ Popping Could be Growing Pains” · sify.com

- WEF — “Anatomy of an AI reckoning” (bubble burst sequence, bank contagion risk) · weforum.org

- Visions / Vision.pk — “AI Bubble 2026: 7 Brutal Truths” (enterprise ROI; circular financing signals) · vision.pk

“The AI Mirage: Power Grids, Paper Billions, and the Global Reality Check” was originally published in Level Up Coding on Medium.