OpenAI Claims Safety 'Red Lines' in Pentagon Deal—But Users Aren't Buying It

The Pentagon deal sparked a mass exodus from ChatGPT—and pushed Anthropic's Claude to the top of the App Store. But the bigger story is in the contract language.

By Jose Antonio Lanz | Edited by Andrew Hayward | Mar 2, 2026 | 7 min read

In brief

- OpenAI signed an agreement with the Pentagon to deploy AI in classified environments.

- The firm said it imposed “red lines,” but the contract allows “all lawful purposes,” a standard that ultimately depends on the government’s own interpretation.

- The controversy sparked the QuitGPT movement and drove a surge in Claude downloads.

OpenAI said this weekend that it reached an agreement with the Pentagon to deploy advanced AI systems in classified environments, marking a significant expansion of the company’s work with the U.S. military.

The announcement came less than 24 hours after the Trump administration blacklisted Anthropic, designating the rival AI firm a “supply chain risk to national security” following a dispute over contract language related to surveillance and autonomous weapons.

President Donald Trump also directed federal agencies to immediately cease using Anthropic’s technology, with Treasury Secretary Scott Bessent writing Monday on X that the agency “is terminating all use of Anthropic products, including the use of its Claude platform, within our department.”

"THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military.

The Leftwing nut jobs at Anthropic… pic.twitter.com/aIEx92nnyx

— The White House (@WhiteHouse) February 27, 2026

The timing of the AI announcements placed OpenAI’s deal under intense scrutiny. In a detailed blog post, the company outlined what it described as firm “red lines” and layered safeguards governing its Pentagon partnership.

The agreement, as presented by OpenAI, raises broader questions about how AI systems will be governed in national security settings, and how the company’s stated restrictions will be interpreted and enforced in practice.

When “lawful” isn’t enough

OpenAI's blog post opens with three commitments framed as non-negotiable: no use of its technology for mass domestic surveillance, for independently directing autonomous weapons systems, or for high-stakes automated decisions such as social credit scoring.

Then comes the actual contract language—which OpenAI notably calls "the relevant language," not "the full agreement."

"The Department of War may use the AI system for all lawful purposes, consistent with applicable law, operational requirements, and well-established safety and oversight protocols," OpenAI said.

That is the exact phrase Anthropic said the government had been demanding throughout negotiations, and the exact phrase Anthropic refused to accept. OpenAI signed it, yet argues its red lines remain fully intact.

However, "lawful" in national security contexts isn't a fixed boundary. It lives inside a patchwork of statutes, executive orders, internal directives, and often classified legal interpretations. When a contract grants "all lawful purposes," the practical limit becomes the government's current legal envelope, not an independent standard set by the vendor.

A cluster of clauses

The weapons provision reads that the AI system "will not be used to independently direct autonomous weapons in any case where law, regulation, or department policy requires human control."

The prohibition only applies where some other authority already requires human control—it borrows its teeth entirely from existing policy, specifically DoD Directive 3000.09. That directive requires autonomous systems to allow commanders to exercise "appropriate levels of human judgment over the use of force."

And “appropriate” is as subjective as can be.

Human judgment is not human control. This distinction was not accidental. Defense scholars have noted that omitting "human-in-the-loop" language was deliberate, precisely to preserve operational flexibility.

OpenAI's strongest counterargument is its cloud-only deployment architecture—fully autonomous lethal decision loops would require edge deployment on battlefield devices, which this contract doesn't permit. That's a real technical constraint.

But cloud-based AI can still perform target identification, pattern-of-life analysis, and mission planning. Those are kill-chain activities regardless of where the final trigger sits. The outcome for a target doesn't differ based on which server the model runs on.

The surveillance clause follows a similar pattern. OpenAI's stated red line: no mass domestic surveillance. The contract language: The system "shall not be used for unconstrained monitoring of U.S. persons' private information as consistent with these authorities"—then lists the Fourth Amendment, FISA, and Executive Order 12333.

The word "unconstrained" implies a constrained version of mass surveillance would be permissible. And EO 12333 is the executive order the NSA has used to justify intercepting Americans' communications when done outside U.S. borders.

This is where Anthropic's concerns about wording throughout the negotiations come into focus. Anthropic's argument was that current law hasn't caught up with what AI makes possible. The government can legally purchase vast amounts of aggregated commercial data about Americans without a warrant, and has already done so.

OpenAI's contract language, by anchoring its protections to existing legal frameworks, may not close the gap Anthropic was actually worried about.

Altman responds

On Saturday night, OpenAI CEO Sam Altman held an AMA, responding to thousands of questions about the deal. When asked what would cause OpenAI to walk away from a government partnership, he answered: "If we were asked to do something unconstitutional or illegal, we will walk away."

If we were asked to do something unconstitutional or illegal, we will walk away. Please come visit me in jail if necessary.

— Sam Altman (@sama) March 1, 2026

That framing places OpenAI's limit at legality, not at an independent ethical judgment about which legal uses the company will or won't enable, which is the position Anthropic defends. Asked whether he worried about future disputes over what counts as "legal," he acknowledged the risk: "If we have to take on that fight we will, but it clearly exposes us to some risk."

On why OpenAI reached a deal where Anthropic could not, Altman offered this: "Anthropic seemed more focused on specific prohibitions in the contract, rather than citing applicable laws, which we felt comfortable with. I'd clearly rather rely on technical safeguards if I only had to pick one. I think Anthropic may have wanted more operational control than we did."

That's a substantive philosophical difference. Anthropic argued that because frontier models can be repurposed for intelligence and military workflows in ways that are hard to anticipate, the limits need to be explicit and binding in writing, even at the cost of the deal. OpenAI's position is that technical architecture, embedded personnel, and existing law together constitute a stronger safeguard than contractual text alone.

The public picked a side

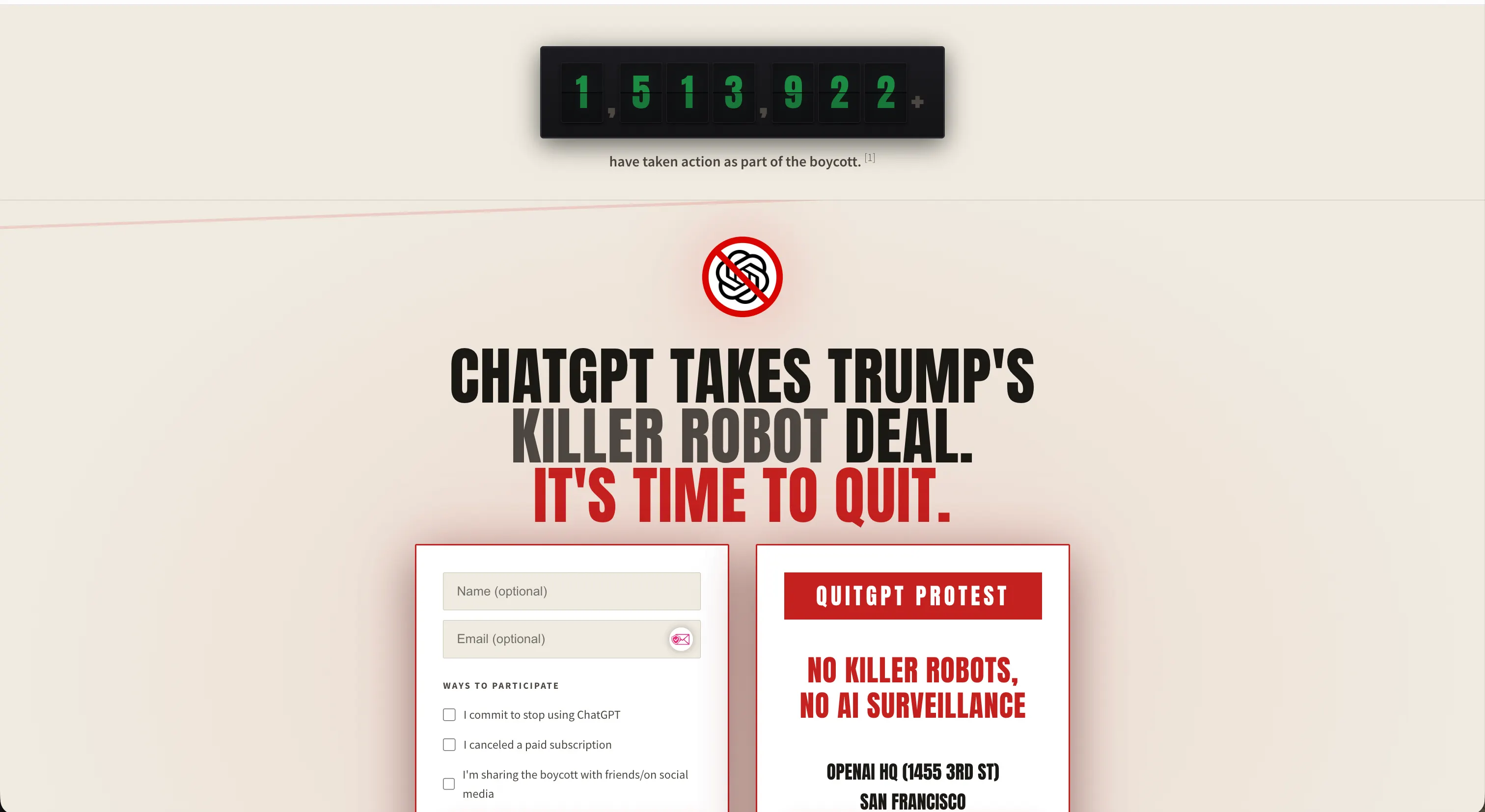

The backlash was immediate. By Monday, the “QuitGPT” movement claimed that over 1.5 million people had taken action—canceling subscriptions, sharing boycott posts, or signing up at quitgpt.org.

The campaign framed OpenAI's move as prioritizing military contracts over user safety, accusing the company of agreeing to let the Pentagon use its technology for "any lawful purpose, including killer robots and mass surveillance."

OpenAI might contest that characterization. But the market moved regardless.

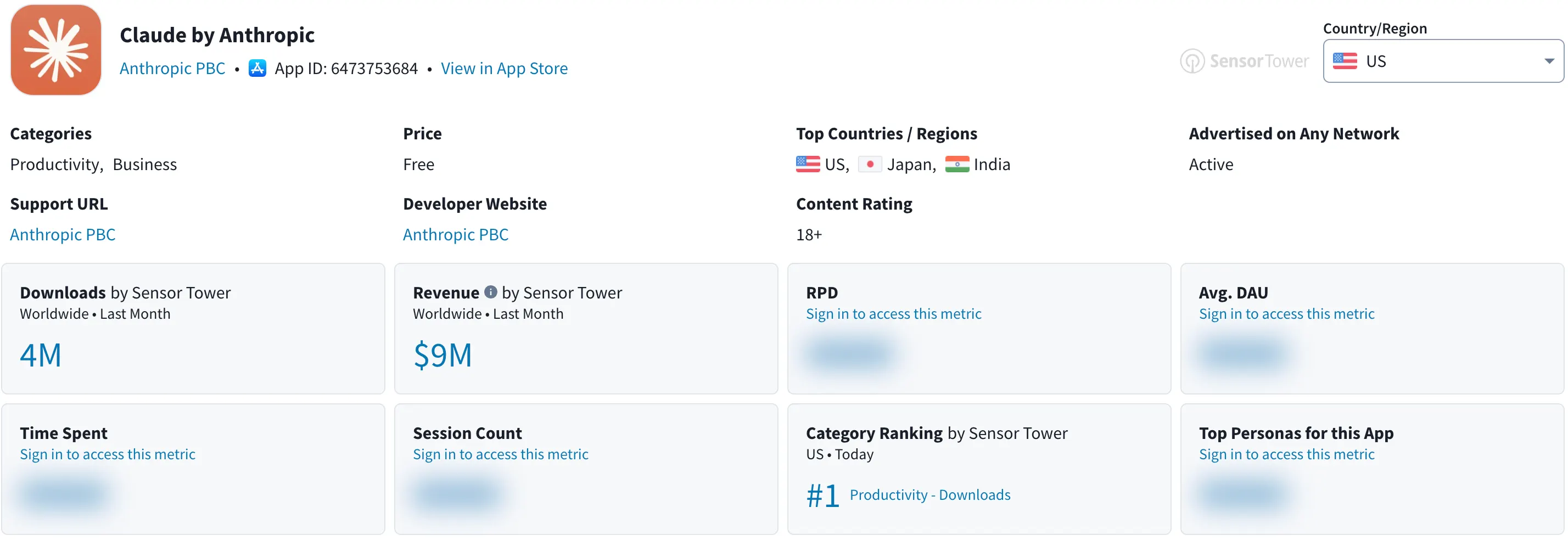

Anthropic's Claude surged past ChatGPT to become the most downloaded free app in the United States on Apple's App Store, with the company telling Decrypt that it saw record daily signups over the weekend.

Pop star Katy Perry shared a screenshot of Claude's pricing page on X. Hundreds of users documented their subscription cancellations publicly on Reddit. Graffiti praising Anthropic appeared outside its San Francisco offices, while chalk attacks covered OpenAI's sidewalks. Even hundreds of OpenAI's own employees had previously signed an open letter supporting Anthropic's refusal to accede to Pentagon demands.

The QuitGPT framing is emotionally compelling, but not entirely precise. Anthropic itself has a partnership with Palantir and Amazon Web Services that gives U.S. intelligence and defense agencies access to Claude models, and those models have allegedly been used in military operations to overthrow the governments of Venezuela and Iran. The ethics of AI and national security contracting were never clean on either side.

What the campaign captured, accurately, is that a large segment of users believed there was a meaningful difference between how the two companies drew their limits—and voted with their subscriptions.

Whether that difference is as meaningful as it appears requires reading the contract carefully.